Node.js is more than just "JavaScript on the server." It's a carefully assembled runtime built on top of battle-tested components that make non-blocking I/O possible. This post breaks down how those components fit together, what they actually do, and why the design choices matter.

Imagine a restaurant with a single waiter. A traditional, multi-threaded server is like having one waiter per table, where each waiter stands next to their table waiting for the food to arrive, doing nothing else. That works, but it gets expensive fast. You need 100 tables? Hire 100 waiters. Node.js is different. It's the restaurant where one waiter takes your order, hands it to the kitchen, and immediately walks over to take someone else's order. When your food is ready, the kitchen rings a bell, and the waiter brings it to you. One waiter. Many tables. No one standing around doing nothing.

That waiter is the event loop. But before we understand the event loop, we need to understand what Node.js is actually made of.

Node.js was created by Ryan Dahl and first announced at JSConf EU in November 2009. The demo was unlike anything the web development community had seen before: a tiny HTTP server, fully non-blocking, written in JavaScript, handling thousands of concurrent connections with ease. The audience reaction was somewhere between bewilderment and excitement.

But why build this at all? The answer is a specific, concrete problem that plagued web servers at the time.

In the late 1990s, a software engineer named Dan Kegel wrote an essay about what he called the "C10K problem": how do you handle 10,000 concurrent connections on a single server? The dominant model at the time was Apache HTTP Server, which spawned a new thread (or process) for each incoming request. That worked fine for hundreds of connections. For thousands, it fell apart. Threads are expensive. Each one consumes memory (typically 1-8MB of stack space), and the OS spends significant time context-switching between them. 10,000 threads meant the server spent more time juggling threads than actually serving requests.

Ryan Dahl watched this problem play out in the way popular web frameworks handled I/O. The example he has pointed to is Flickr's file upload progress bar: the browser had no way of knowing how far along an upload was and had to keep asking the web server, because the standard approach to I/O was synchronous and blocking. A server built that way does nothing productive while it waits for data to come in from a socket.

His insight was that the combination of JavaScript's single-threaded execution model (no shared-state threading problems), and non-blocking I/O via an event loop, was exactly the right tool for building high-concurrency servers. JavaScript had already been designed around callbacks and events because of its browser roots. The language fit the model perfectly.

Node.js grew quickly. In 2010, Isaac Schlueter created npm (Node Package Manager), which became the central registry for the growing ecosystem. By 2011, Node.js had its first Windows release, significantly broadening its reach. By 2013, major companies including LinkedIn, Walmart, and PayPal had moved significant parts of their infrastructure to Node.js, with public reports of dramatic improvements in concurrency and resource usage.

In late 2014, a group of core contributors frustrated with the slow release cadence under Joyent's stewardship forked the project into io.js. The fork moved faster, adopted newer V8 versions, and introduced ES6 features sooner. It was a significant moment: for a while, the Node.js ecosystem had two competing runtimes.

The split was short-lived. In 2015, io.js and Node.js merged back under the newly formed Node.js Foundation (later merged into the OpenJS Foundation). Node.js v4 was the first merged release, combining the best of both efforts. The Foundation model brought faster releases, long-term support (LTS) schedules, and a more open governance structure.

Today, Node.js releases a new major version every six months, with even-numbered releases receiving LTS status for three years. The project is maintained by a large team of contributors from across the industry.

Timeline:

```
2009 ── Ryan Dahl announces Node.js at JSConf EU
2010 ── npm created by Isaac Schlueter
2011 ── First Windows release
2012 ── Ryan Dahl steps back; Isaac Schlueter takes over
2014 ── io.js fork begins
2015 ── io.js and Node.js merge; Node.js Foundation formed (Node v4)
2018 ── Node.js Foundation merges with JS Foundation → OpenJS Foundation
2020 ── Node.js 14 LTS released; V8 8.x, full async stack traces
2022 ── Node.js 18 LTS; experimental fetch API built in
2023 ── Node.js 20 LTS; stable test runner, permission model
2024 ── Node.js 22 LTS; require() for ES modules, WebSocket client built in
```

Knowing this history matters because Node.js's design choices aren't arbitrary. Every aspect of the architecture traces back to that original goal: maximum I/O concurrency on a single thread, without the complexity of multi-threaded synchronization.

Most people describe Node.js as "JavaScript on the server," which is technically true but wildly incomplete. Node.js is a runtime environment, not a language. It takes JavaScript, which was originally designed to run only in browsers, and makes it capable of running on any operating system, interacting with the file system, making network requests, spawning child processes, and a lot more.

But JavaScript alone can't do any of that. JavaScript is a scripting language with no concept of a file system, a socket, or a thread. So how does Node.js bridge that gap?

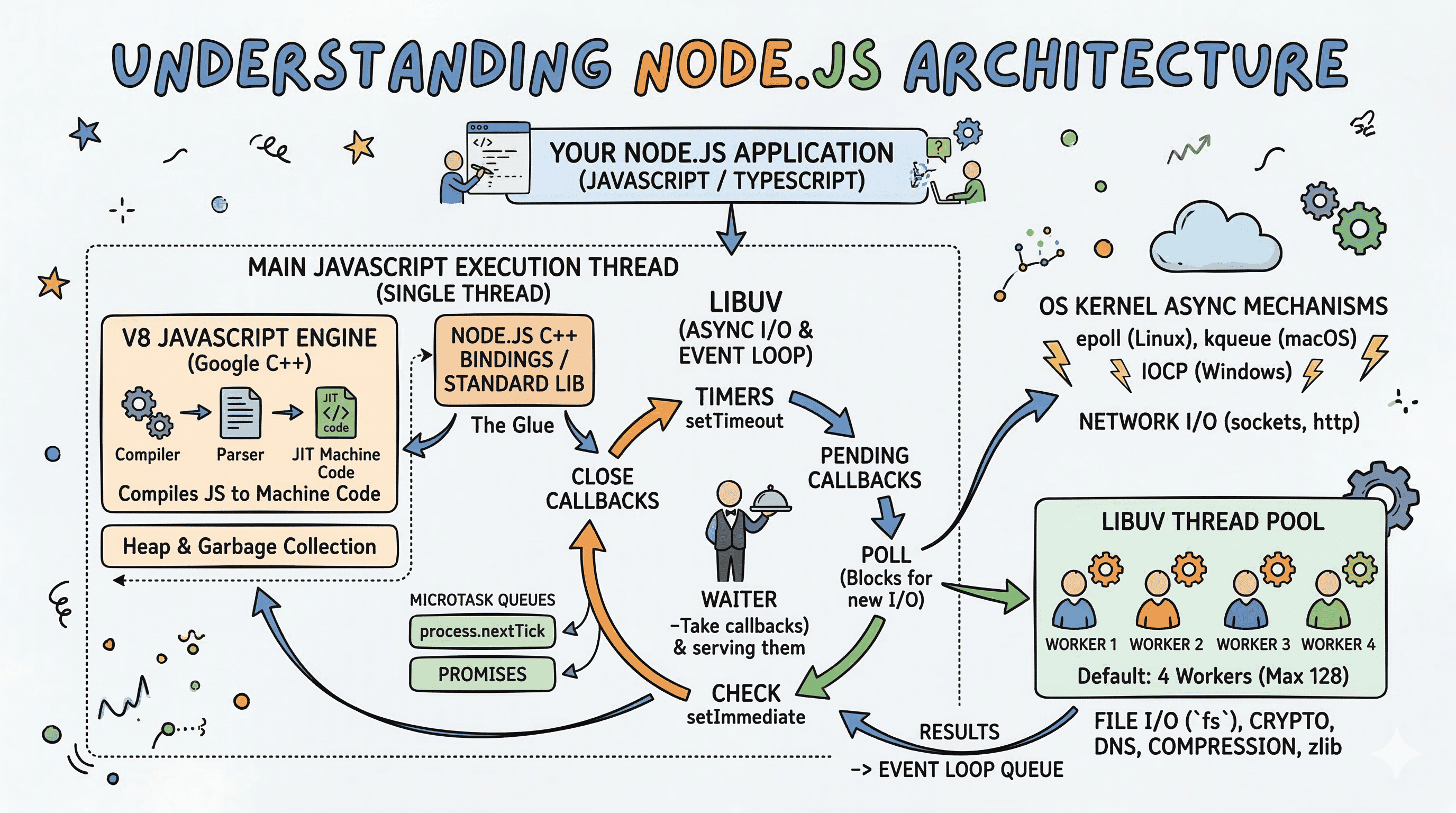

The answer is its architecture: three major components working together.

```
┌──────────────────────────────────────────────────────┐
│                   Your Node.js App                   │
│              (JavaScript / TypeScript)               │
└──────────────────────────┬───────────────────────────┘
                           │
┌──────────────────────────▼───────────────────────────┐
│                     Node.js Core                     │
│       (Node Bindings / Node Standard Library)        │
│                    Written in C++                    │
└────────────┬─────────────────────────────┬───────────┘
             │                             │
┌────────────▼──────────┐    ┌─────────────▼───────────┐
│          V8           │    │          libuv          │
│   JavaScript Engine   │    │  Async I/O & Event Loop │
│   (Compiles JS to     │    │  (C library by libuv    │
│    machine code)      │    │   contributors)         │
└───────────────────────┘    └─────────────────────────┘
```

Three layers. Let's go through each one properly.
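You can see these components from inside a running process. The sketch below simply reads `process.versions`, which reports the versions of V8 and libuv bundled into the Node.js binary you are running; the exact values will differ from machine to machine.

```javascript
// Each entry describes a component compiled into the running Node.js binary
const { node, v8, uv, napi } = process.versions;

console.log(`Node.js runtime : ${node}`);
console.log(`V8 engine       : ${v8}`);
console.log(`libuv           : ${uv}`);
console.log(`Node-API version: ${napi}`);
```

If you ever need to check whether a V8 or libuv feature is available in a given Node.js release, this is the quickest place to look.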

V8 is the JavaScript engine that powers both Google Chrome and Node.js. It was built by Google and is written in C++. When you write const x = 5 + 3, something needs to take that text, understand it, and actually run it on your CPU. That something is V8.

Think of V8 like a translator at a UN conference. Your JavaScript is the speech in one language. The CPU only understands machine code. V8 sits in the middle and translates everything in real time, and it does it fast.

V8 doesn't just interpret your code line by line. That would be too slow. Instead, it goes through a multi-stage pipeline:

```
Your JavaScript Source Code
         │
         ▼
┌─────────────────────┐
│       Parser        │  Reads raw JS text, checks syntax
└────────┬────────────┘
         │  AST (Abstract Syntax Tree)
         ▼
┌─────────────────────┐
│      Ignition       │  Interpreter: converts AST to Bytecode
│    (Interpreter)    │  Runs the bytecode immediately
└────────┬────────────┘
         │  Profiling data (which code runs hot?)
         ▼
┌─────────────────────┐
│      TurboFan       │  JIT Compiler: optimizes hot code
│   (JIT Compiler)    │  to native machine code
└────────┬────────────┘
         │
         ▼
Machine Code (runs directly on CPU)
```

Here's the key insight: V8 doesn't compile everything upfront. It starts by interpreting your code (Ignition), which is fast to start but slower to execute. As it runs, it identifies "hot" functions, ones called frequently, and passes them to TurboFan, the JIT (Just-In-Time) compiler, which produces optimized machine code for them.

This is why you'll often hear that Node.js performance improves after a warm-up period. Cold start, V8 is interpreting. After a while, hot paths get compiled to native code and things get much faster.

Here's something intermediate and advanced devs need to know. V8 makes assumptions when optimizing code. If you write a function that always receives a number, TurboFan will compile a highly optimized version for numbers. But if you then pass a string to that same function, V8 has to "deoptimize" back to the interpreter. This is called a deoptimization bail-out.

```javascript
function add(a, b) {
  return a + b;
}

// V8 sees numbers repeatedly - TurboFan optimizes for numbers
add(1, 2);
add(3, 4);
add(5, 6);

// Now you pass a string - V8 has to bail out and deoptimize
add("hello", "world");
```

The practical takeaway: keep your functions type-consistent. Mixing types in hot paths hurts performance. This is one of the biggest hidden wins TypeScript gives you at the application level, not just for readability, but as a nudge toward consistent typing.
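One way to act on that takeaway is to normalize types once at the boundary, so the hot function only ever sees one kind of value. The sketch below is illustrative only: `toNumbers` and `sum` are hypothetical helpers, and how much this helps in practice depends on the workload and V8 version.

```javascript
// Hypothetical boundary helper: coerce every input to a number once, up front
function toNumbers(values) {
  return values.map((value) => {
    const n = Number(value);
    if (Number.isNaN(n)) throw new TypeError(`Not numeric: ${String(value)}`);
    return n;
  });
}

// Hot path: only ever receives numbers, so the type feedback V8 collects stays consistent
function sum(numbers) {
  let total = 0;
  for (let i = 0; i < numbers.length; i++) total += numbers[i];
  return total;
}

const mixedInput = [1, "2", 3.5, "4"];
console.log(sum(toNumbers(mixedInput))); // 10.5
```

The design choice is to pay the coercion cost once per input instead of letting mixed types reach the loop that runs most often.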

V8 also manages your memory. It has its own heap (the region of memory where objects live) and a garbage collector called Orinoco. The garbage collector runs in the background, finding objects with no remaining references and freeing their memory.

The heap is split into multiple generations:

```
┌──────────────────────────────────────────────────────┐
│                       V8 Heap                        │
│                                                      │
│  ┌────────────────────┐  ┌────────────────────────┐  │
│  │     New Space      │  │       Old Space        │  │
│  │    (Young Gen)     │  │       (Old Gen)        │  │
│  │                    │  │                        │  │
│  │  Short-lived objs  │  │ Objects that survived  │  │
│  │   collected fast   │  │    GC in young gen     │  │
│  │     (Minor GC)     │  │       (Major GC)       │  │
│  └────────────────────┘  └────────────────────────┘  │
│                                                      │
│  ┌────────────────────┐  ┌────────────────────────┐  │
│  │     Code Space     │  │   Large Object Space   │  │
│  │  (Compiled code)   │  │   (Objects > 256KB)    │  │
│  └────────────────────┘  └────────────────────────┘  │
└──────────────────────────────────────────────────────┘
```

Most objects are short-lived. V8 knows this and runs a fast minor GC on the New Space frequently. Objects that survive a couple of minor GCs get promoted to Old Space, which is collected less frequently but takes longer when it does run.

This matters for performance: creating millions of short-lived objects is actually fine. Creating objects that accumulate in Old Space and never get collected is where memory leaks happen.
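To watch these spaces in a live process, Node's built-in `v8` module exposes per-space statistics. The following is a minimal sketch: `heapSpaces` is a hypothetical helper name, and the exact set of spaces reported varies between V8 versions.

```javascript
const v8 = require("v8");

// Returns one entry per V8 heap space (names such as "new_space" and "old_space")
function heapSpaces() {
  return v8.getHeapSpaceStatistics().map((space) => ({
    name: space.space_name,
    usedKB: Math.round(space.space_used_size / 1024),
    sizeKB: Math.round(space.space_size / 1024),
  }));
}

console.table(heapSpaces());
```

Sampling this periodically and comparing the `old_space` numbers over time is one rough way to spot the accumulation pattern described above.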

libuv is a C library originally written for Node.js but now used by other projects too. It's the component responsible for all asynchronous I/O in Node.js, and it's where the event loop actually lives.

Here's the thing most people misunderstand: the event loop is not part of V8. V8 handles JavaScript execution. libuv handles everything else: file system operations, network I/O, DNS lookups, child processes, and the event loop that orchestrates all of it.

Think of it like a kitchen coordinator in a restaurant. V8 is the chef who actually cooks (executes JavaScript). libuv is the coordinator who manages all the timers, receives orders from different tables, delegates to the right station, and tells the chef when something is ready to be plated.

Operating systems have native async capabilities (epoll on Linux, kqueue on macOS, IOCP on Windows). But the APIs are completely different across platforms. If Node.js had to write separate code for each OS's async system, it would be an enormous maintenance burden and still might not work consistently.

libuv abstracts all of that. It provides one consistent API for async I/O, and underneath, it talks to whatever the OS supports natively.

```
Your Node.js App
      │
      │  fs.readFile(), net.connect(), etc.
      ▼
libuv API (consistent, platform-agnostic)
      │
      ├──── Linux ──────► epoll
      │
      ├──── macOS ──────► kqueue
      │
      └──── Windows ────► IOCP (I/O Completion Ports)
```

Here's a fact that surprises a lot of people: Node.js is not entirely single-threaded.

Your JavaScript runs on a single thread. The event loop runs on a single thread. But libuv maintains a pool of worker threads, by default 4, for operations that can't be done asynchronously by the OS.

Which operations use the thread pool? More than you'd think:

- File system operations (fs.readFile, fs.writeFile, etc.)
- DNS lookups (dns.lookup, but NOT dns.resolve)
- Crypto operations (crypto.pbkdf2, crypto.scrypt)
- zlib compression/decompression

When you call fs.readFile, Node doesn't magically do it on one thread. It offloads the work to one of libuv's thread pool workers. That worker does the blocking I/O, and when it's done, it pushes the result into the event loop's callback queue.

```
┌─────────────────────────────────────────────────────────┐
│                       Main Thread                       │
│                (JavaScript + Event Loop)                │
│                                                         │
│  fs.readFile("big.json", callback) ──────────────────►  │
│                                     libuv dispatches    │
└──────────────────────────────┬──────────────────────────┘
                               │
                               ▼
                ┌──────────────────────────────────┐
                │        libuv Thread Pool         │
                │       (Default: 4 threads)       │
                │                                  │
                │  Thread 1: reading big.json ◄──  │
                │  Thread 2: idle                  │
                │  Thread 3: idle                  │
                │  Thread 4: idle                  │
                └──────────────┬───────────────────┘
                               │  Done! Push result to event queue
                               ▼
                ┌──────────────────────────────────┐
                │         Event Loop Queue         │
                │    [callback with file data]     │
                └──────────────┬───────────────────┘
                               │
                               ▼
          Main thread picks up the callback and runs it
```

This is why, when you issue more than 4 parallel fs.readFile calls, the 5th has to wait for a thread to free up. You can change the thread pool size with the UV_THREADPOOL_SIZE environment variable (current libuv caps it at 1024; releases before libuv 1.30 capped it at 128), but there are trade-offs: more threads means more OS context switching.

```bash
UV_THREADPOOL_SIZE=16 node server.js
```

Network I/O (like HTTP requests and TCP connections) is the exception. Those are handled by the OS's native async mechanisms directly (epoll, kqueue, IOCP) and don't use the thread pool at all. This is why Node.js is particularly good at handling many concurrent network connections. Thousands of simultaneous HTTP connections? The OS handles the async part natively. No thread pool bottleneck.

The default thread pool size of 4 is a common source of latency spikes that are hard to diagnose. Consider this scenario: you have an HTTP server that, for every request, reads a file from disk. Under low load, everything feels instant. Under moderate load (say, 20 concurrent requests), 4 of them get thread pool workers immediately, while the other 16 queue up. The queued requests don't fail, they just wait silently. Your response times spike without any obvious error in your logs.

You can observe thread pool saturation with a simple test. Run four blocking operations (like crypto.pbkdf2) in parallel and time a fifth:

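(The listing for this test is the first of the code listings at the end of this post: a loop that launches five crypto.pbkdf2 calls and logs how long each one takes.)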

With the default pool size of 4, the output will look something like:

```
Operation 1 done in ~1000ms
Operation 2 done in ~1000ms
Operation 3 done in ~1100ms
Operation 4 done in ~1100ms
Operation 5 done in ~2000ms  ← had to wait for a thread to free up
```

Operation 5 takes roughly twice as long because it queued behind the first four. With UV_THREADPOOL_SIZE=5, all five complete in ~1000ms.

The right thread pool size depends on your workload. A good starting point:

This is worth being explicit about, because mixing them up leads to wrong mental models:

| Operation | Uses Thread Pool? |
|---|---|
| fs.readFile, fs.writeFile, fs.stat | Yes |
| dns.lookup | Yes |
| dns.resolve, dns.resolve4 | No (c-ares over non-blocking sockets) |
| net.connect, TCP/HTTP sockets | No (epoll/kqueue/IOCP) |
| crypto.pbkdf2, crypto.scrypt | Yes |
| crypto.randomBytes (async form) | Yes |
| zlib.gzip, zlib.deflate | Yes |
| http.request | No (socket I/O; the hostname lookup still goes through dns.lookup) |
| child_process.spawn | No (OS process management) |
| Custom N-API addons | Depends on implementation |

The pattern: work that depends on a blocking system call (file system access, getaddrinfo-style DNS lookups) or on CPU-heavy native code (crypto, compression) goes through the thread pool. Pure network I/O (where the kernel notifies you when data arrives) does not.

The event loop is the heart of Node.js. It's what lets a single thread handle thousands of concurrent operations. But "event loop" is often explained in hand-wavy terms. Let's be precise.

The event loop is a loop. It runs phases. Each phase has a FIFO queue of callbacks to execute. When a phase's queue is empty (or it hits its max callback limit), the event loop moves to the next phase.

```
     ┌──────────────────────────────┐
┌───►│            timers            │  setTimeout, setInterval callbacks
│    └──────────────┬───────────────┘
│                   │
│    ┌──────────────▼───────────────┐
│    │      pending callbacks       │  I/O errors deferred from prev cycle
│    └──────────────┬───────────────┘
│                   │
│    ┌──────────────▼───────────────┐
│    │        idle, prepare         │  Internal use only (libuv internals)
│    └──────────────┬───────────────┘
│                   │
│    ┌──────────────▼───────────────┐
│    │             poll             │  ◄── Fetch new I/O events
│    │                              │  Blocks here if queue is empty
│    └──────────────┬───────────────┘  and no timers are pending
│                   │
│    ┌──────────────▼───────────────┐
│    │            check             │  setImmediate callbacks
│    └──────────────┬───────────────┘
│                   │
│    ┌──────────────▼───────────────┐
└────┤       close callbacks        │  socket.on('close', ...) etc.
     └──────────────────────────────┘
```

Timers phase: Runs callbacks scheduled by setTimeout and setInterval whose delay has expired. Note: the delay is a minimum, not a guarantee. If the event loop is busy, your timer fires late.

Pending callbacks phase: Executes I/O callbacks that were deferred from the previous loop iteration, mostly error callbacks from network operations.

Idle, prepare phase: Used internally by libuv. You'll never interact with this directly.

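Poll phase: This is the most important one. It does two things: it calculates how long it should block and wait for I/O, and then it processes the events in the poll queue.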

If the poll queue is empty, the event loop checks for setImmediate callbacks. If those exist, it moves on. If not, it waits here for I/O callbacks to arrive (up to a timeout based on pending timers).

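Check phase: Runs setImmediate callbacks. When both are scheduled from inside an I/O callback, setImmediate always fires before any timers, even if the timer delay is 0, because the check phase comes right after poll. When they are scheduled from the main module, the order of the two is not guaranteed.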

Close callbacks phase: Fires close event callbacks, like when a socket or handle is abruptly closed.
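The ordering between the poll, check, and timers phases can be observed directly. The sketch below schedules a zero-delay timer and a setImmediate from inside an I/O callback (an `fs.stat` on the current directory, chosen only because it is always available); `orderInsideIoCallback` is a hypothetical helper name.

```javascript
const fs = require("fs");

// Resolves with the order in which the two callbacks actually ran
function orderInsideIoCallback() {
  return new Promise((resolve, reject) => {
    const order = [];
    fs.stat(".", (err) => {
      if (err) return reject(err);
      // We are now inside an I/O callback, i.e. in the poll phase
      setTimeout(() => {
        order.push("timeout");
        resolve(order);
      }, 0);
      setImmediate(() => order.push("immediate"));
    });
  });
}

orderInsideIoCallback().then((order) => console.log(order.join(" -> ")));
// immediate -> timeout
```

Because the check phase follows poll within the same loop iteration, the setImmediate callback runs first; the timer has to wait for the timers phase of the next iteration.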

Here's where things get subtle. Between each phase transition (and, since Node.js 11, after each individual callback within a phase), the event loop processes two special queues:

- The process.nextTick() queue
- The Promise microtask queue (.then(), async/await resolutions)

And process.nextTick drains before the Promise microtask queue.

```
┌────────────────────────────────────────────────┐
│         Between Every Phase Transition         │
│                                                │
│  1. Drain process.nextTick queue               │
│     (all of them, recursively if needed)       │
│                                                │
│  2. Drain Promise microtask queue              │
│     (.then callbacks, await resolutions)       │
│                                                │
│  3. Move to next event loop phase              │
└────────────────────────────────────────────────┘
```

This leads to one of the most common interview gotchas in Node.js:

```javascript
console.log("1: synchronous");

setTimeout(() => console.log("2: setTimeout"), 0);

setImmediate(() => console.log("3: setImmediate"));

Promise.resolve().then(() => console.log("4: promise"));

process.nextTick(() => console.log("5: nextTick"));

console.log("6: synchronous");
```

Output:

```
1: synchronous
6: synchronous
5: nextTick
4: promise
2: setTimeout
3: setImmediate
```

The order of the last two lines (setTimeout and setImmediate) can vary in some contexts.

Synchronous code runs first. Then microtasks: nextTick before promises. Then the timer/check phases.

One thing to be careful about: process.nextTick recursion. If a nextTick callback schedules another nextTick, the event loop will keep processing them before moving on. You can starve I/O this way:

```javascript
// This will block the event loop forever
function recurse() {
  process.nextTick(recurse);
}

recurse();
// Nothing else will ever run
```

This is why the Node.js docs say setImmediate is usually preferred over process.nextTick for most use cases.
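A minimal sketch of that advice: split long-running work into slices and schedule each following slice with setImmediate, so timers and I/O callbacks get a turn in between. `processInChunks` is a hypothetical helper, and the chunk size of 1000 is an arbitrary assumption rather than a tuned value.

```javascript
// Processes `items` in slices of `chunkSize`, yielding to the event loop between slices
function processInChunks(items, chunkSize, handleItem) {
  return new Promise((resolve) => {
    let index = 0;

    function runChunk() {
      const end = Math.min(index + chunkSize, items.length);
      for (; index < end; index++) handleItem(items[index]);

      if (index < items.length) {
        setImmediate(runChunk); // the loop can serve timers and I/O before the next slice
      } else {
        resolve();
      }
    }

    runChunk();
  });
}

const numbers = Array.from({ length: 100000 }, (_, i) => i);
let total = 0;

processInChunks(numbers, 1000, (n) => {
  total += n;
}).then(() => console.log(total)); // 4999950000
```

Swapping setImmediate for process.nextTick in `runChunk` would bring back the starvation problem, because every slice would run before the loop could leave its current phase.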

The event loop runs continuously, but its iteration time is not fixed. If a callback takes 200ms to complete, every other callback that was waiting has to wait at least 200ms longer. This accumulated delay is called event loop lag, and it's the primary health metric for any Node.js server.

You can measure it manually by scheduling a callback and checking how long it actually took to run versus when it was scheduled:

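(The listing for this measurement is the second of the code listings at the end of this post: a measureEventLoopLag helper built on setImmediate and process.hrtime.bigint.)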

Node.js 14.10+ (backported to 12.19) also exposes performance.eventLoopUtilization(), which gives you a more precise utilization ratio (0 = idle, 1 = fully saturated):

```javascript
const { performance } = require("perf_hooks");

const elu1 = performance.eventLoopUtilization();

setTimeout(() => {
  const elu2 = performance.eventLoopUtilization(elu1);
  console.log(
    `Event loop utilization: ${(elu2.utilization * 100).toFixed(1)}%`,
  );
}, 5000);
```

High utilization (above 80%) consistently is a signal that you either need to offload CPU work to worker threads, or you have callbacks that are taking too long to complete.
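For lag rather than utilization, `perf_hooks` also ships `monitorEventLoopDelay`, which collects samples into a histogram. The sketch below is one possible way to use it: `sampleLoopDelay` is a hypothetical helper, and the 10ms resolution and 200ms window are arbitrary assumptions.

```javascript
const { monitorEventLoopDelay } = require("perf_hooks");

// Samples event loop delay for `durationMs`, then resolves with stats in milliseconds
function sampleLoopDelay(durationMs) {
  return new Promise((resolve) => {
    const histogram = monitorEventLoopDelay({ resolution: 10 });
    histogram.enable();

    setTimeout(() => {
      histogram.disable();
      resolve({
        meanMs: histogram.mean / 1e6, // histogram values are reported in nanoseconds
        maxMs: histogram.max / 1e6,
        p99Ms: histogram.percentile(99) / 1e6,
      });
    }, durationMs);
  });
}

sampleLoopDelay(200).then((stats) => console.log(stats));
```

The absolute numbers are best read against a baseline taken from the same process while idle, since the sampling timer's own interval can show up in the readings.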

When the event loop reaches the poll phase with an empty queue, it doesn't just spin busy. It calculates a timeout and tells the OS "wake me up when something happens, or after this many milliseconds." This is the blocking I/O wait.

How does it calculate the timeout?

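- If setImmediate callbacks are pending: timeout is 0 (don't block, go check them)
- If timers are pending: timeout is the time until the next timer expires
- If neither: block indefinitely until the OS delivers an I/O event

This is the mechanism that lets a Node.js process sleep efficiently when it has nothing to do. An idle HTTP server sitting at the poll phase isn't spinning the CPU. It's parked waiting for the OS to deliver a network event.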

```
Poll Phase Decision Logic:

Is check queue non-empty? ──► Yes ── Set timeout = 0, move to check
        │
        No
        │
Are timers pending? ──────── Yes ── Set timeout = time until next timer
        │
        No
        │
Block indefinitely until OS delivers an I/O event
```

Understanding this explains why a Node.js process exits when there's nothing left to do: once there are no pending I/O handles, no timers, and no work in any queue, the event loop has nothing to wait for and exits cleanly.
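The flip side of that exit rule is that any single referenced handle keeps the process alive. A small sketch, assuming a background heartbeat timer that should not block shutdown (the `heartbeat` name and intervals are illustrative):

```javascript
// A referenced timer counts as pending work; unref() tells the loop not to wait for it
const heartbeat = setInterval(() => console.log("still alive"), 1000);
console.log(heartbeat.hasRef()); // true

heartbeat.unref();
console.log(heartbeat.hasRef()); // false

// The only referenced work left: once this fires, the loop has nothing to wait for
setTimeout(() => console.log("last referenced work done, exiting"), 100);
```

Without the `unref()` call, the interval would keep the process running indefinitely; with it, the process exits shortly after the 100ms timer fires.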

The single-threaded nature of JavaScript execution means any synchronous operation that takes a long time directly delays every other pending callback. Common blocking culprits:

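(The listing for these culprits is the third of the code listings at the end of this post: fs.readFileSync, JSON.parse on a large payload, a backtracking-prone regex, and a tight loop.)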

The fix is the same in all cases: move the work off the main thread. For I/O, use the async API. For CPU work, use worker_threads. For large JSON, consider streaming parsers like stream-json. For regex, validate and limit input length at the boundary before it gets near a complex pattern.
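For the regex case specifically, the boundary check can be sketched as follows. The 256-character limit and the `isAllAs` name are assumptions made for illustration; the rewritten pattern accepts the same strings as `/^(a+)+$/` but has no nested quantifier to backtrack through.

```javascript
const MAX_INPUT_LENGTH = 256; // hypothetical limit, tune per use case

// Accepts the same strings as /^(a+)+$/ without the nested quantifier
const SAFE_PATTERN = /^a+$/;

function isAllAs(input) {
  // Reject oversized or non-string input before any pattern sees it
  if (typeof input !== "string" || input.length > MAX_INPUT_LENGTH) return false;
  return SAFE_PATTERN.test(input);
}

console.log(isAllAs("aaaa")); // true
console.log(isAllAs("aaab")); // false
console.log(isAllAs("a".repeat(10000))); // false (rejected by the length check)
```

The length check is cheap and runs first, so even if a risky pattern slips in later, the input it can be applied to stays bounded.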

So V8 runs JavaScript. libuv handles async I/O. But how does JavaScript code call into libuv? That's the job of Node.js bindings, sometimes called Node.js core or Node.js built-ins.

These are C++ files that expose libuv and OS-level functionality to JavaScript. When you write fs.readFile() in JavaScript, you're calling a thin JavaScript wrapper around a C++ binding that calls into libuv.

```
Your JavaScript Code
               │
               │  require('fs').readFile(...)
               ▼
┌──────────────────────────────┐
│   Node.js JS Standard Lib    │  lib/fs.js (thin JS layer)
│    (JavaScript wrappers)     │
└──────────────┬───────────────┘
               │  calls into
               ▼
┌──────────────────────────────┐
│     Node.js C++ Bindings     │  src/node_file.cc
│   (process.binding('fs'))    │
└──────────────┬───────────────┘
               │  calls into
               ▼
┌──────────────────────────────┐
│            libuv             │  uv_fs_read(), uv_fs_open()...
│    (async I/O operations)    │
└──────────────────────────────┘
```

V8 provides a C++ API that lets you create JavaScript objects, functions, and values from C++ code. Node.js bindings use this API to create JavaScript objects that wrap C++ functionality.

When you do require('fs'), Node.js loads lib/fs.js, which near the top does something like:

```javascript
const binding = internalBinding("fs");
```

internalBinding is an internal-only function that loads a built-in C++ module. Older Node.js versions exposed the same mechanism publicly as process.binding, which is now deprecated. The C++ module (node_file.cc) initializes itself by creating JavaScript functions that point to C++ functions using V8's API.

Here's a simplified mental model of what that looks like in C++:

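(The listing for this is the fourth of the code listings at the end of this post: a simplified C++ Initialize function that registers open, read, and close.)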

On the JavaScript side, this just looks like a normal object with methods. The binding layer is what makes JavaScript feel like it has native superpowers.

Beyond core bindings, Node.js supports native addons: C++ modules that you can write yourself and require like any other module. This is how libraries like sharp (image processing), bcrypt, and node-canvas achieve performance that pure JavaScript can't match.

The modern way to write native addons is through N-API (also called node-api), which provides a stable C API that doesn't break between Node.js versions. Before N-API, addons had to be recompiled every time V8's internal API changed (which happened constantly). N-API abstracts V8's internals so your addon works across major Node.js releases.

```
Native Addon (your-addon.node)
                         │
                         │  Uses N-API
                         ▼
┌────────────────────────────────────────────────┐
│                  N-API Layer                   │
│      (Stable C API, version-independent)       │
└────────────────────────┬───────────────────────┘
                         │
                         ▼
┌────────────────────────────────────────────────┐
│                  V8 Internals                  │
│    (Changes between Node.js versions, but      │
│     N-API shields your addon from this)        │
└────────────────────────────────────────────────┘
```

If you've ever seen a .node file or run node-gyp build, that's a native addon being compiled. The .node file is a dynamic library (like a .dll or .so) that Node.js loads at runtime.

Here's the complete picture from node server.js to handling your first request:

```
$ node server.js
                               │
                               ▼
┌─────────────────────────────────────────────────────────────┐
│  1. Node.js bootstrapping                                   │
│     - Initializes V8 (heap, context, etc.)                  │
│     - Initializes libuv (event loop, thread pool)           │
│     - Sets up core bindings (fs, net, http, etc.)           │
└──────────────────────────────┬──────────────────────────────┘
                               │
                               ▼
┌─────────────────────────────────────────────────────────────┐
│  2. Loads and executes your JavaScript (V8)                 │
│     - Reads server.js from disk                             │
│     - Parses → Ignition bytecode → TurboFan (hot paths)     │
│     - Registers event handlers, sets up HTTP server         │
└──────────────────────────────┬──────────────────────────────┘
                               │
                               ▼
┌─────────────────────────────────────────────────────────────┐
│  3. Event loop starts (libuv)                               │
│     - Poll phase waits for I/O events                       │
│     - OS notifies libuv when a TCP connection arrives       │
└──────────────────────────────┬──────────────────────────────┘
                               │  HTTP request arrives
                               ▼
┌─────────────────────────────────────────────────────────────┐
│  4. libuv pushes callback into event loop queue             │
│     - Main thread wakes up from poll                        │
│     - Runs your request handler function (V8)               │
└──────────────────────────────┬──────────────────────────────┘
                               │  Handler calls fs.readFile()
                               ▼
┌─────────────────────────────────────────────────────────────┐
│  5. C++ binding dispatches to libuv thread pool             │
│     - Main thread returns to event loop (non-blocking)      │
│     - Thread pool worker reads the file from disk           │
└──────────────────────────────┬──────────────────────────────┘
                               │  File read complete
                               ▼
┌─────────────────────────────────────────────────────────────┐
│  6. Result pushed to event loop queue                       │
│     - Main thread runs your readFile callback               │
│     - Sends HTTP response                                   │
└─────────────────────────────────────────────────────────────┘
```

This whole flow, from request to response, happens on one JavaScript thread, with OS-level async and libuv's thread pool handling the actual I/O.

The claim that "Node.js is single-threaded" is partially true. Your JavaScript code and the event loop run on one thread. But libuv's thread pool adds 4 more threads by default. The OS's network I/O is handled by the kernel (not your process's threads). Worker threads (via the worker_threads module) let you run actual JavaScript in separate threads too.

More accurate: the JavaScript execution model is single-threaded. The runtime is not.

The claim that "Node.js is bad at CPU-intensive work" is also partially true. The problem isn't Node.js specifically. It's that blocking the event loop with CPU-intensive JS code prevents anything else from running. If a request triggers a complex computation that takes 2 seconds of pure CPU work, the event loop is stuck for 2 seconds.

The solutions: Worker threads for CPU-intensive JS, child processes, or offloading to a separate service. Node.js is just fine for CPU work as long as you don't block the event loop doing it.

Async code is not always faster. Async adds overhead: scheduling callbacks, going through the event loop, potentially going through the thread pool. For tiny, fast operations, synchronous code can actually be faster. The benefit of async is concurrency, not raw speed. You're trading latency for throughput.
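The concurrency benefit is easy to see with simulated I/O. In this sketch, `fakeIo` is a hypothetical stand-in for any operation that waits about 50ms on something external; the timings printed are approximate and will vary by machine.

```javascript
const sleep = (ms) => new Promise((resolve) => setTimeout(resolve, ms));

// Hypothetical stand-in for an I/O call that takes about 50ms
async function fakeIo(label) {
  await sleep(50);
  return label;
}

// Awaits each operation before starting the next: roughly 3 x 50ms
async function sequential() {
  const start = Date.now();
  await fakeIo("a");
  await fakeIo("b");
  await fakeIo("c");
  return Date.now() - start;
}

// Starts all three, then waits for them together: roughly 50ms
async function concurrent() {
  const start = Date.now();
  await Promise.all([fakeIo("a"), fakeIo("b"), fakeIo("c")]);
  return Date.now() - start;
}

(async () => {
  console.log(`sequential: ${await sequential()}ms`);
  console.log(`concurrent: ${await concurrent()}ms`);
})();
```

No single operation got faster; each still takes about 50ms. The total drops only because the waits overlap.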

No, setTimeout(fn, 0) does not run the callback immediately. It schedules the callback for the timers phase of the next event loop iteration (and Node.js treats a delay of 0 as 1ms). Before it runs, the current synchronous code finishes, then the process.nextTick queue drains, then the Promise microtask queue drains, then the timer phase runs.

Node.js 12+ ships worker_threads as a stable API. It lets you run JavaScript in parallel, on actual OS threads. Unlike child_process, worker threads share memory with the parent (via SharedArrayBuffer), which makes data transfer much cheaper.

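(The listing for this is the fifth of the code listings at the end of this post: a single file that acts as both main thread and worker using isMainThread and parentPort.)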

The key difference from child processes: no serialization overhead for shared data. Workers and the main thread can read from a SharedArrayBuffer without copying. This is relevant for scenarios like image processing, data transformation, or running ML inference.
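Here is a minimal sketch of that shared-memory path. The worker source is passed inline with `eval: true` purely to keep the example in one file; `incrementInWorker` and the loop count of 1000 are illustrative assumptions.

```javascript
const { Worker } = require("worker_threads");

// Inline worker source: increments a shared counter, then reports completion
const workerSource = `
  const { workerData, parentPort } = require("worker_threads");
  const counter = new Int32Array(workerData.shared);
  for (let i = 0; i < 1000; i++) Atomics.add(counter, 0, 1);
  parentPort.postMessage("done");
`;

function incrementInWorker() {
  return new Promise((resolve, reject) => {
    const shared = new SharedArrayBuffer(4); // room for one Int32
    const counter = new Int32Array(shared);

    const worker = new Worker(workerSource, {
      eval: true,
      workerData: { shared }, // the buffer is shared with the worker, not copied
    });

    // Read the value straight from shared memory once the worker reports in
    worker.once("message", () => resolve(Atomics.load(counter, 0)));
    worker.once("error", reject);
  });
}

incrementInWorker().then((value) => console.log(value)); // 1000
```

The only message that crosses the thread boundary is the string "done"; the counter itself is read directly from memory both threads can see, which is why Atomics is used for the updates.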

Just know that worker threads have their own V8 instance and their own event loop. They're not cheap to spawn. Use a worker pool (like piscina) instead of spawning workers on demand.

Worker threads handle CPU-intensive work within a single process. The cluster module takes a different approach: it forks the entire Node.js process across CPU cores, giving each fork its own event loop, V8 heap, and memory space.

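(The listing for this is the last of the code listings at the end of this post: a primary process that forks one worker per CPU core and restarts any that exit.)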

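By default, the primary process listens on the port, accepts incoming connections, and distributes them across workers round-robin. That is the default on every platform except Windows. In the alternative mode, the primary creates the listen socket and hands it to the workers, which accept connections directly and leave the balancing to the OS.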

The trade-off versus worker threads: cluster workers are fully isolated. No shared memory, no SharedArrayBuffer. That isolation makes them simpler to reason about, but any shared state (sessions, counters, caches) has to live outside the process in something like Redis. In production, most teams use PM2 or a container orchestrator to handle clustering automatically rather than managing it in application code.

Node.js is an architecture, not just a runtime. Here's what you should walk away knowing:

V8 is Google's JavaScript engine. It parses, interprets via Ignition, and JIT-compiles hot code via TurboFan. It manages your heap and runs garbage collection. It can deoptimize when type assumptions break, which is why type-consistent code performs better.

libuv is the async I/O backbone. It owns the event loop. It has a thread pool (default 4 workers) for operations the OS can't do asynchronously. Network I/O bypasses the thread pool and goes directly through OS-native async (epoll, kqueue, IOCP). This is why Node.js handles network concurrency so well.

Node.js bindings are the C++ glue that connects JavaScript land to libuv. fs.readFile is JavaScript calling C++ calling libuv. Native addons extend this further, and N-API keeps them stable across versions.

The event loop has distinct phases. Microtasks (nextTick, then Promises) drain between every phase transition. The poll phase is where the loop waits for I/O. Understanding this order is the difference between writing correct async code and writing code that looks right but behaves in surprising ways.

Node.js is not magic. It's a smart assembly of well-designed components solving a specific problem: handling I/O-bound concurrency on a single JavaScript thread. When you stay in that lane, Node.js is exceptional. When you throw long-running CPU work at it without worker threads, you're fighting its design.

Know the architecture, and you'll never have to guess why your code behaves the way it does.


Code listings for the examples above, in order of appearance.

Thread pool saturation test:

```javascript
const crypto = require("crypto");

const start = Date.now();

// Launch 5 pbkdf2 operations simultaneously
// Default thread pool has 4 workers, so the 5th one will have to wait
for (let i = 0; i < 5; i++) {
  crypto.pbkdf2("password", "salt", 100000, 64, "sha512", (err, key) => {
    console.log(`Operation ${i + 1} done in ${Date.now() - start}ms`);
  });
}
```

Measuring event loop lag:

```javascript
function measureEventLoopLag(callback) {
  const start = process.hrtime.bigint();
  setImmediate(() => {
    const lag = Number(process.hrtime.bigint() - start) / 1e6; // in ms
    callback(lag);
  });
}

// Check every second
setInterval(() => {
  measureEventLoopLag((lag) => {
    if (lag > 100) {
      console.warn(
        `Event loop lag: ${lag.toFixed(2)}ms - something is blocking`,
      );
    }
  });
}, 1000);
```

Common blocking culprits:

```javascript
// 1. Synchronous file operations: blocks while disk is read
const data = fs.readFileSync("huge-file.json"); // BLOCKS

// 2. JSON parsing of large payloads: pure CPU work
const parsed = JSON.parse(massiveJsonString); // Can take tens of milliseconds

// 3. Regex on untrusted input: catastrophic backtracking risk
const match = /^(a+)+$/.test(userInput); // ReDoS vulnerability

// 4. Tight loops doing computation
for (let i = 0; i < 10_000_000; i++) {
  /* ... */
} // BLOCKS
```

Simplified C++ binding initialization:

```cpp
// Simplified version of what Node.js does internally
void Initialize(Local<Object> exports, Local<Value>, Local<Context> context) {
  Environment* env = Environment::GetCurrent(context);

  // Creates a JavaScript function called "open" that calls the C++ Open function
  env->SetMethod(exports, "open", Open);
  env->SetMethod(exports, "read", Read);
  env->SetMethod(exports, "close", Close);
}
```

Worker thread example:

```javascript
const { Worker, isMainThread, parentPort } = require("worker_threads");

if (isMainThread) {
  // This runs on the main thread
  const worker = new Worker(__filename);

  worker.on("message", (result) => {
    console.log("Computed:", result);
  });

  worker.postMessage({ task: "heavyComputation" });
} else {
  // This runs in the worker thread
  parentPort.on("message", (data) => {
    // Simulate heavy CPU work
    let result = 0;
    for (let i = 0; i < 1_000_000_000; i++) result += i;
    parentPort.postMessage(result);
  });
}
```

Cluster example:

```javascript
const cluster = require("cluster");
const http = require("http");
const os = require("os");

if (cluster.isPrimary) {
  const cpuCount = os.cpus().length;

  for (let i = 0; i < cpuCount; i++) {
    cluster.fork();
  }

  cluster.on("exit", (worker) => {
    console.log(`Worker ${worker.process.pid} died, restarting`);
    cluster.fork(); // auto-restart crashed workers
  });
} else {
  http
    .createServer((req, res) => {
      res.end(`Handled by worker ${process.pid}`);
    })
    .listen(3000);
}
```