Node.js runs JavaScript on a single thread, yet handles thousands of concurrent requests without breaking a sweat. This post explains how that's actually possible, what the event loop does, and where the real work gets delegated.

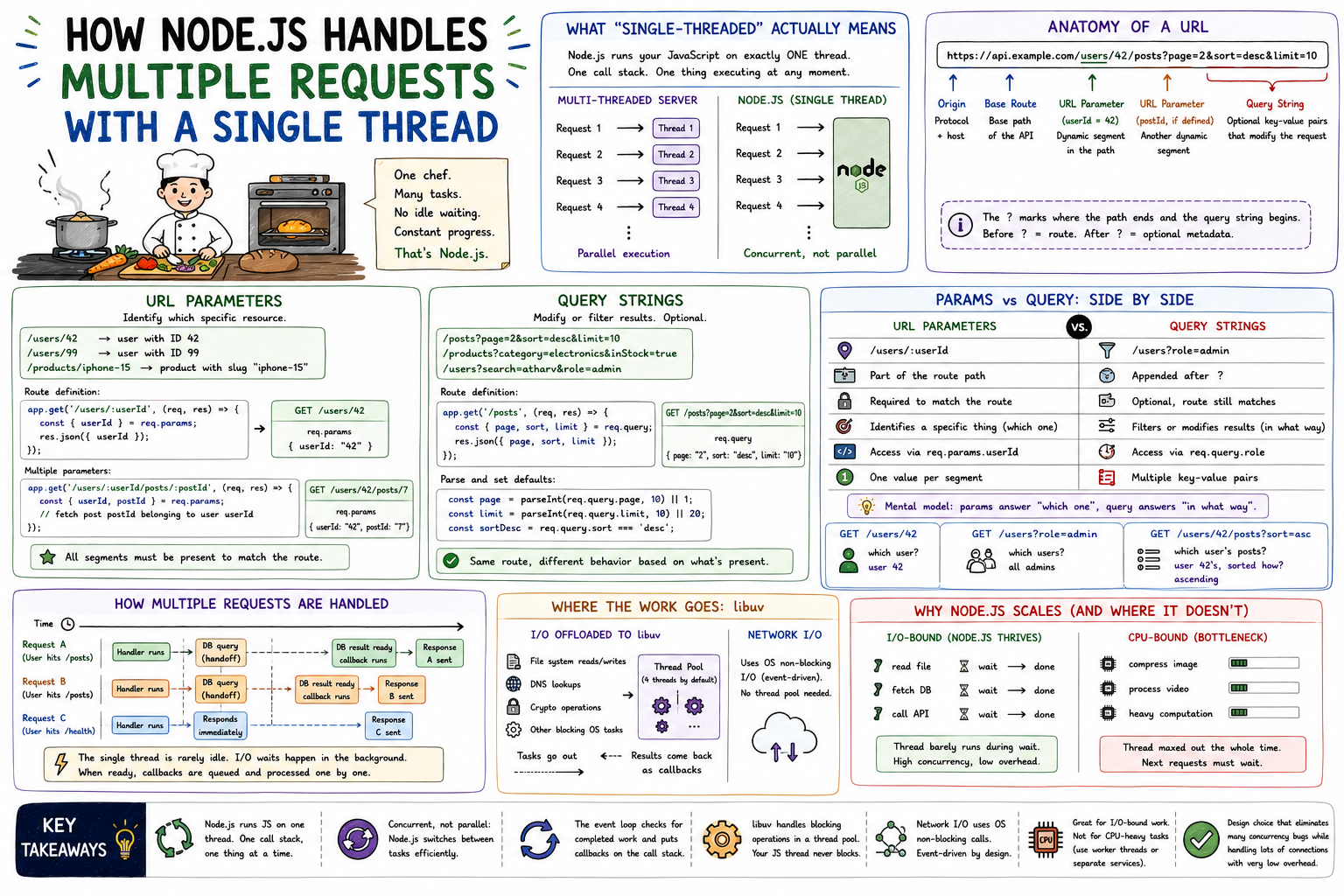

Picture a chef at a small restaurant. There's only one of them. But they're not stuck waiting at the stove staring at a pot until it boils. They start the broth, then go chop vegetables, then check on the bread in the oven, then plate a dish that finished earlier. Nothing gets their full attention every second, but everything gets handled. That's Node.js. One thread. Constant progress. No idle waiting.

A thread is the smallest unit of execution the operating system can schedule onto a CPU core. Many server platforms use multiple threads to handle concurrent work, for example one thread per request. If ten requests arrive at a thread-per-request Java server simultaneously, ten threads (usually taken from a pool) each handle one request, running in parallel on the available cores.

Node.js takes a different bet. It runs your JavaScript on exactly one thread. One call stack. One piece of JavaScript executing at any given moment.

    Multi-threaded server:          Node.js:

    Request 1 ──► Thread 1          Request 1 ──┐
    Request 2 ──► Thread 2          Request 2 ──┤
    Request 3 ──► Thread 3          Request 3 ──┼──► Single Thread
    Request 4 ──► Thread 4          Request 4 ──┘

    Parallel execution              Concurrent, not parallel
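A direct consequence of having one call stack is run-to-completion: once a function starts, no other JavaScript on that thread runs until it returns. As a minimal sketch (the variable names are illustrative), a 0 ms timer scheduled before a long synchronous loop only fires after the loop has finished, so it always sees the final counter value.

```javascript
// Run-to-completion: a callback cannot interrupt code that is already running.
let counter = 0;
let seenByTimer = null;

// Ask for this callback "as soon as possible" (0 ms).
setTimeout(() => {
  // Runs only after the synchronous code below has completely finished.
  seenByTimer = counter;
  console.log(`timer saw counter = ${seenByTimer}`);
}, 0);

// Synchronous work keeps the single call stack busy the whole time.
for (let i = 0; i < 1_000_000; i++) {
  counter++;
}
console.log(`loop finished: counter = ${counter}`);
```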

The key word is concurrent, not parallel. Parallel means doing multiple things at the same time. Concurrent means managing multiple things at once by switching attention between them efficiently. Node.js is concurrent. The difference matters.
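Here's a minimal sketch of concurrency without parallelism, using only Node's built-in timers: two async tasks take turns on the same thread, yielding to the event loop between slices of work with `setImmediate`. The `task` and `yieldToLoop` names are hypothetical.

```javascript
// Concurrency on one thread: two tasks take turns instead of running simultaneously.
const trace = [];

// Yield back to the event loop and resume on a later turn (via the check phase).
const yieldToLoop = () => new Promise((resolve) => setImmediate(resolve));

async function task(name, steps) {
  for (let step = 1; step <= steps; step++) {
    trace.push(`${name}${step}`); // one slice of synchronous work
    await yieldToLoop();          // pause here so the other task gets a turn
  }
}

// Start both tasks "at once"; neither waits for the other to finish.
const done = Promise.all([task('A', 3), task('B', 3)]).then(() => {
  console.log(trace.join(' ')); // A1 B1 A2 B2 A3 B3
  return trace;
});
```

Both tasks are in progress for the whole run, yet at any instant only one slice of JavaScript is executing.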

The event loop is what makes this work. It's a continuous loop that checks: "Is there any pending work? Any callbacks ready to run? Any I/O that finished?" When something is ready, it pulls it in and runs it.

    ┌────────────────────────────────────────┐
    │               Event Loop               │
    │                                        │
    │  ┌──────────────┐   ┌─────────────┐    │
    │  │    timers    │   │  I/O events │    │
    │  │(setTimeout,  │   │ (file reads,│    │
    │  │ setInterval) │   │ network, db)│    │
    │  └────┬─────────┘   └──────┬──────┘    │
    │       │                    │           │
    │       └─────────┬──────────┘           │
    │                 ▼                      │
    │         ┌───────────────┐              │
    │         │  Call Stack   │              │
    │         │  (your code)  │              │
    │         └───────────────┘              │
    └────────────────────────────────────────┘

When a request comes in, Node.js runs your handler synchronously until it reaches something that involves waiting: reading a file, querying a database, making an HTTP call. It starts that operation without waiting for it, your handler returns (or pauses at an `await`), and the thread moves on. When the operation completes, its callback (or the code after the `await`) gets placed in a queue, and the event loop picks it up on a later iteration.

Your single thread is almost never blocked. It's always either running code or waiting for the event loop to give it the next thing to run.

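Here's what most explanations skip: the event loop itself is provided by libuv, a C library underneath Node.js, and libuv is also where Node.js delegates work that would otherwise block. libuv maintains a thread pool (4 threads by default, configurable through the `UV_THREADPOOL_SIZE` environment variable) for tasks that either have no non-blocking OS interface or are CPU-heavy: file system operations, DNS lookups via `dns.lookup()`, and some crypto and zlib functions such as `crypto.pbkdf2()`.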

    ┌─────────────────────────────────────────────────────┐
    │                   Node.js Process                   │
    │                                                     │
    │  ┌─────────────────────┐   ┌─────────────────────┐  │
    │  │   V8 (JS Engine)    │   │        libuv        │  │
    │  │    Single Thread    │   │     Thread Pool     │  │
    │  │                     │   │                     │  │
    │  │ req comes in        │   │                     │  │
    │  │ runs your handler   │   │                     │  │
    │  │ hits fs.readFile()  │   │                     │  │
    │  │ hands it to libuv   │──►│ fs.readFile()       │  │
    │  │ continues next req  │   │   ↓ completes       │  │
    │  │ callback queued     │◄──│ result ready        │  │
    │  │                     │   │ also handles:       │  │
    │  │                     │   │  dns.lookup()       │  │
    │  │                     │   │  crypto.pbkdf2()    │  │
    │  └─────────────────────┘   └─────────────────────┘  │
    └─────────────────────────────────────────────────────┘
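To see what handing work to the pool buys you, this sketch derives the same key twice, once with `crypto.pbkdf2Sync` on the main thread and once with the async `crypto.pbkdf2`, while a 1 ms interval counts how often the main thread gets to run callbacks. The password, salt, and iteration count are arbitrary illustrative values; the count just needs to make each call take noticeable CPU time.

```javascript
const crypto = require('node:crypto');

// Illustrative parameters: enough iterations to take a noticeable amount of CPU time.
const args = ['password', 'salt', 200_000, 64, 'sha512'];

// Counts how often the main thread gets a chance to run a 1 ms interval callback.
let ticks = 0;
const ticker = setInterval(() => ticks++, 1);

// 1) Synchronous version: runs on the main thread, so no callback can fire meanwhile.
const ticksBeforeSync = ticks;
crypto.pbkdf2Sync(...args);
const ticksDuringSync = ticks - ticksBeforeSync; // always 0

// 2) Async version: the hashing runs on libuv's thread pool; the main thread stays free.
const ticksBeforeAsync = ticks;
const comparison = new Promise((resolve, reject) => {
  crypto.pbkdf2(...args, (err, key) => {
    clearInterval(ticker);
    if (err) return reject(err);
    const result = {
      ticksDuringSync,
      ticksDuringAsync: ticks - ticksBeforeAsync,
      keyLength: key.length,
    };
    console.log(result);
    resolve(result);
  });
});
```

The interval cannot fire at all while `pbkdf2Sync` runs, but keeps firing during the async call because the hashing happens off the main thread.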

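Network I/O (like outgoing HTTP requests or database queries over TCP) doesn't use the thread pool. libuv hands sockets to the operating system's non-blocking I/O mechanism (epoll on Linux, kqueue on macOS and BSD, IOCP on Windows), which is event-driven by design, and simply waits to be notified when a socket is ready. The one common exception is turning a hostname into an IP address: by default Node.js does that with `dns.lookup()`, which runs on the pool.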

When three requests arrive at your Express server nearly simultaneously, here's what actually happens:

    Time ──────────────────────────────────────────────►

    Request A arrives: handler runs, hits DB query, hands off to libuv
      │
    Request B arrives: handler runs, hits DB query, hands off to libuv
      │
    Request C arrives: handler runs, returns static data immediately ──► Response C sent
      │
    DB result for A ready ──► callback runs ──► Response A sent
      │
    DB result for B ready ──► callback runs ──► Response B sent

The single thread is rarely idle. It's always processing whichever callback is next in the queue. The I/O waits happen in parallel in the background, managed by libuv and the OS. When they complete, results get queued and processed one by one, in the order the operations finish rather than the order the requests arrived.
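The three-request timeline can be reproduced without Express or a database. In this sketch, `fakeDbQuery` and `handle` are hypothetical stand-ins: a timer simulates query latency, and the three handlers are started in the order A, B, C.

```javascript
const { setTimeout: sleep } = require('node:timers/promises');

const sent = [];

// Hypothetical stand-in for a database query: a timer simulates the network wait.
function fakeDbQuery(latencyMs) {
  return sleep(latencyMs, { rows: [] });
}

// Hypothetical handler: queries the "database" unless dbLatencyMs is null.
async function handle(name, dbLatencyMs) {
  if (dbLatencyMs !== null) {
    await fakeDbQuery(dbLatencyMs); // suspends here; the thread moves on
  }
  sent.push(`Response ${name} sent`);
}

// Requests arrive in the order A, B, C.
const allSent = Promise.all([
  handle('A', 50),   // DB query taking ~50 ms
  handle('B', 80),   // DB query taking ~80 ms
  handle('C', null), // static data, no waiting
]).then(() => {
  console.log(sent.join('\n')); // Response C sent, Response A sent, Response B sent
  return sent;
});
```

If B's simulated query were faster than A's, B's response would go out before A's.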

Node.js is excellent for I/O-bound work: REST APIs, real-time apps, proxies, anything that involves waiting for databases, external services, or file reads. The single thread is almost never the bottleneck because it spends almost no time blocked.
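A quick way to see why waiting is cheap: this sketch starts 100 simulated I/O calls at once, each a 50 ms timer standing in for a database or HTTP round trip (an assumption; real I/O latency varies). Because the waits overlap, the total is close to a single wait rather than the sum of all of them.

```javascript
const { setTimeout: sleep } = require('node:timers/promises');

// Hypothetical I/O call: 50 ms of pure waiting, standing in for a DB or HTTP round trip.
const simulatedIo = () => sleep(50);

async function runConcurrently(count) {
  const start = Date.now();
  // Start every call before awaiting any of them, so the waits overlap.
  await Promise.all(Array.from({ length: count }, () => simulatedIo()));
  return Date.now() - start;
}

const elapsed = runConcurrently(100).then((ms) => {
  // Expect roughly one 50 ms wait in total, far below the 100 × 50 ms = 5000 ms sum.
  console.log(`100 overlapping 50 ms waits took ${ms} ms`);
  return ms;
});
```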

The ceiling is CPU-bound work: image processing, video encoding, large JSON transformations, complex calculations. When your JavaScript is doing heavy computation, it's occupying the single thread. Every other request waits. This is why you don't write image compression logic directly in a Node.js API handler. You offload it to a worker thread or a separate service.

    I/O-bound (Node.js thrives):        CPU-bound (Node.js bottlenecks):

    read file  ──► wait ──► done        compress image
    fetch DB   ──► wait ──► done              ↕
    call API   ──► wait ──► done        (single thread stuck here)
                                        next request waits...

    Thread barely runs during wait.     Thread maxed out the whole time.
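The CPU-bound case is just as easy to reproduce. In this sketch, `busyWork` is a hypothetical stand-in for something like image compression: it spins the CPU for 200 ms, and a timer that should fire after 10 ms (think of it as the next request) has to wait until the loop ends.

```javascript
// Hypothetical CPU-bound work: spin until `ms` milliseconds have passed.
function busyWork(ms) {
  const end = Date.now() + ms;
  let iterations = 0;
  while (Date.now() < end) iterations++;
  return iterations;
}

const scheduledAt = Date.now();

// The "next request": should be handled after about 10 ms.
const lateness = new Promise((resolve) => {
  setTimeout(() => {
    const waitedMs = Date.now() - scheduledAt;
    console.log(`10 ms timer fired after ${waitedMs} ms`);
    resolve(waitedMs);
  }, 10);
});

busyWork(200); // blocks the only JavaScript thread; the timer above must wait
```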

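If you need to keep CPU-intensive work in-process, Node.js provides the built-in `worker_threads` module, which runs JavaScript on additional threads, each with its own event loop. For heavy or independently scaled workloads, though, a dedicated worker service is usually the cleaner move.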
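A minimal in-process sketch with `worker_threads`: a deliberately slow recursive Fibonacci stands in for CPU-heavy work and runs on a worker thread, while an interval keeps firing on the main thread. The worker code is passed as a string with `eval: true` only to keep the example in one file; a real project would normally put it in its own module. `fibInWorker` is a hypothetical helper name.

```javascript
const { Worker } = require('node:worker_threads');

// Code for the worker thread, passed inline (eval: true) so the sketch fits in one file.
const workerSource = `
  const { parentPort, workerData } = require('node:worker_threads');
  // Deliberately slow recursive Fibonacci: a stand-in for CPU-heavy work.
  const fib = (n) => (n < 2 ? n : fib(n - 1) + fib(n - 2));
  parentPort.postMessage(fib(workerData.n));
`;

// Run fib(n) on a separate thread and resolve with the result.
function fibInWorker(n) {
  return new Promise((resolve, reject) => {
    const worker = new Worker(workerSource, { eval: true, workerData: { n } });
    worker.once('message', resolve);
    worker.once('error', reject);
    worker.once('exit', (code) => {
      if (code !== 0) reject(new Error(`worker stopped with exit code ${code}`));
    });
  });
}

// While the worker computes, the main thread keeps servicing its event loop.
let ticks = 0;
const ticker = setInterval(() => ticks++, 5);

const fibResult = fibInWorker(32).then((value) => {
  clearInterval(ticker);
  console.log(`fib(32) = ${value}; main-thread interval fired ${ticks} times meanwhile`);
  return value;
});
```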

The single-threaded model isn't a limitation. It's a deliberate design that removes a large class of multithreading bugs from your JavaScript (data races on shared mutable state, deadlocks between locks) while handling I/O-heavy workloads with very low overhead. Async callbacks can still interleave in unexpected orders, but two pieces of JavaScript on the same thread never run at the same instant. Once you understand what the event loop is actually doing, Node.js stops feeling like magic and starts feeling like a very well-engineered trade-off.
