Learn how to build production-ready idempotent APIs that prevent duplicate operations, handle race conditions, and maintain data consistency even when clients retry failed requests.

Picture this: You're buying concert tickets online. You click "Purchase" and the page freezes. Did it work? You're not sure, so you click again. And again. The next morning, you wake up to three separate charges for the same concert. Three tickets you don't need. Three painful refund requests ahead.

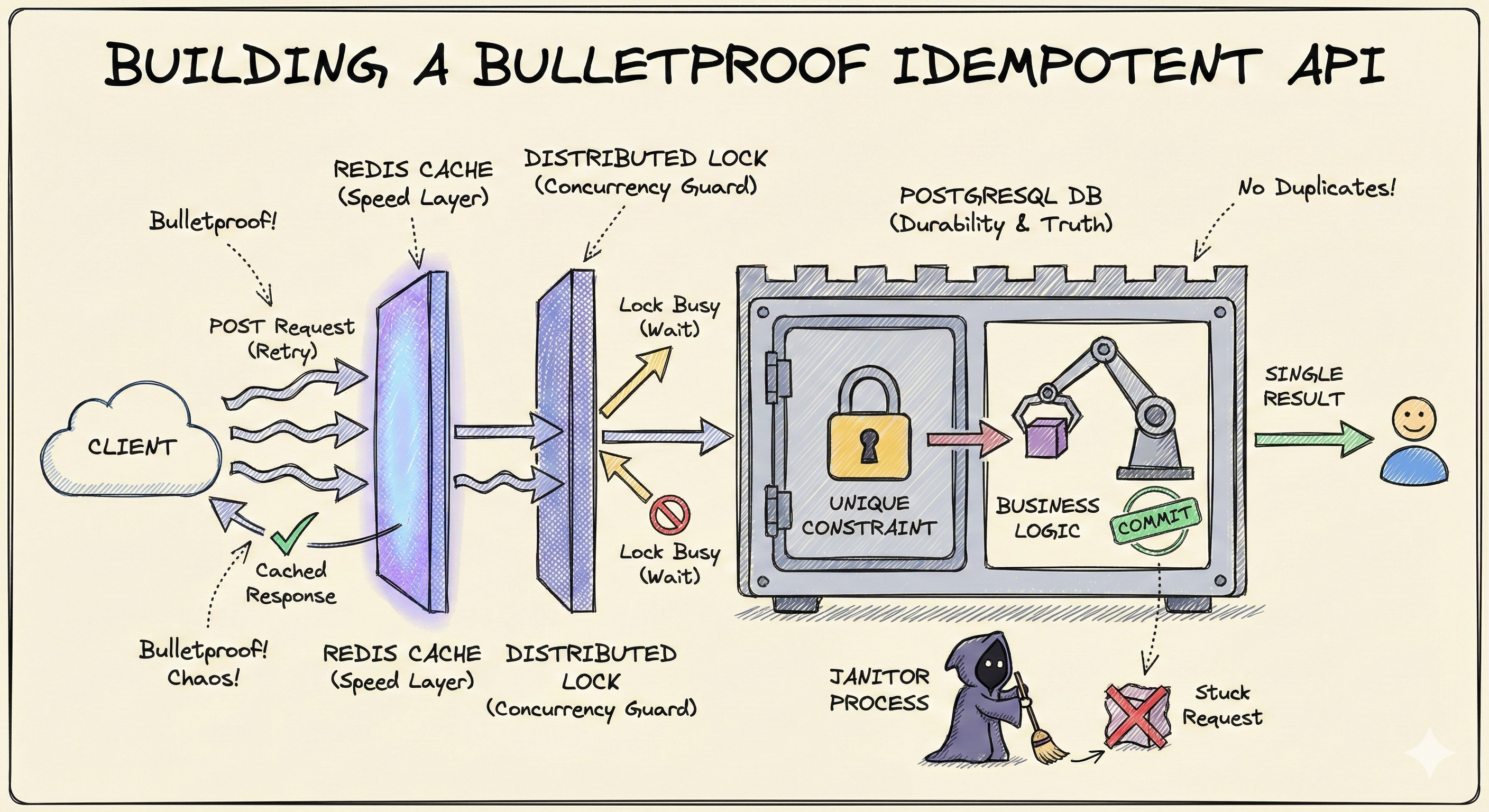

This nightmare scenario is exactly what idempotent API design prevents. But here's the thing: building a truly bulletproof idempotent system isn't just about checking if a request has been seen before. It's about handling the chaos of distributed systems: network failures, server crashes, race conditions, and the thousand ways things can go wrong when two requests arrive at the exact same millisecond.

In this guide, we'll build an idempotent API system from the ground up, starting with the fundamentals and progressing to production-grade solutions that handle every edge case. Whether you're just learning about idempotency or architecting systems that process millions of transactions, you'll find actionable insights here.

Let's start with the simplest possible definition: An idempotent operation produces the same result no matter how many times you perform it.

Think of the "on" side of a light switch. Press it once and the light turns on. Press it a thousand more times while it's already on and the light stays on. That's idempotency: the action can be repeated safely, and nothing changes after the first execution.

In the context of APIs, idempotency means that sending the same request multiple times has the same effect as sending it once. The first request might create a resource or charge a payment. Every subsequent identical request recognizes "I've already done this" and returns the same result without duplicating the operation.

Network requests fail. It's not a question of if, but when. A mobile client loses signal mid-request. A load balancer times out. A server crashes after processing but before responding. From the client's perspective, these all look the same: no response received.

The responsible thing for a client to do? Retry the request.

But here's where things get dangerous. If your API isn't idempotent, that retry creates a duplicate operation, such as a second order or a second charge.

Users notice. Support tickets flood in. Revenue gets lost in refunds. Database integrity breaks down.

Some HTTP methods are naturally idempotent by design:

GET: Reading data doesn't change anything, so it's inherently safe to repeat.

PUT: "Set resource X to state Y" is idempotent because setting something to the same state multiple times doesn't change the outcome.

DELETE: Deleting something that's already deleted leaves the server in the same state (the resource doesn't exist), even if the second call returns a different status code such as 404.

But POST, the most common method for creating resources and processing payments, is not idempotent by default. Send a POST request five times, and you typically create five separate resources.
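A toy in-memory model makes the difference concrete. This is only a sketch: the `store` map and the `put`/`post` helpers are hypothetical stand-ins for a resource API, not part of any framework.

```javascript
// A toy resource store: ids map to resource objects.
const store = new Map();
let nextId = 1;

// PUT semantics: "set resource `id` to `state`". Repeating it changes nothing.
function put(id, state) {
  store.set(id, { ...state });
  return store.get(id);
}

// POST semantics: "create a new resource". Every call mints a new id.
function post(state) {
  const id = String(nextId++);
  store.set(id, { ...state });
  return id;
}

// Five identical PUTs leave exactly one resource behind...
for (let i = 0; i < 5; i++) put("order-1", { total: 299.99 });
console.log(store.size); // 1

// ...while five identical POSTs create five separate resources.
for (let i = 0; i < 5; i++) post({ total: 299.99 });
console.log(store.size); // 6
```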

POST is the method we need to make idempotent ourselves, and that is where idempotency keys come in.

The most widely adopted solution to the POST problem is elegantly simple: let the client tell the server "this specific request should only happen once."

This is done through an idempotency key: a unique identifier (typically a UUID) that the client generates and includes in a request header. Here's what it looks like:

```http
POST /api/orders
Idempotency-Key: 7f3e9c2a-8d1b-4c5a-b9e6-1f0a2c3d4e5f
Content-Type: application/json

{ "items": [...], "total": 299.99 }
```

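The contract is simple: the first request with a given key is processed normally, and any later request carrying the same key receives the stored result of that first request instead of triggering the operation again.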

Here's the basic flow visualized:

```
┌──────────┐
│  Client  │
└────┬─────┘
     │ POST /orders
     │ Idempotency-Key: abc-123
     ▼
┌──────────────────┐
│      Server      │──────► Check: Key exists?
└────────┬─────────┘
         │
   ┌─────┴──────────────┐
   │                    │
  YES                   NO
   │                    │
   ▼                    ▼
┌────────────────┐  ┌─────────────────┐
│ Return Cached  │  │ Process Request │
│ Response       │  │ Save Result     │
└────────────────┘  └─────────────────┘
```

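The key insight is that retry logic is now safe. The client can retry with confidence because a retry carrying the same key never runs the operation a second time; at worst, it receives the stored result of the first attempt.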
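On the client side, the one rule that matters is to generate the key once per logical operation and reuse it on every retry. Here is a minimal sketch; `send(body, headers)` is a hypothetical transport function (in practice it would wrap `fetch` or your HTTP client) that throws when no response arrives, and the backoff values are illustrative.

```javascript
const { randomUUID } = require("node:crypto");

// Retries one logical operation. The idempotency key is created ONCE, outside
// the retry loop, so every attempt is recognizably the same request.
async function postWithRetry(send, body, { maxAttempts = 4, baseDelayMs = 100 } = {}) {
  const headers = { "Idempotency-Key": randomUUID() };

  for (let attempt = 1; ; attempt++) {
    try {
      return await send(body, headers);
    } catch (err) {
      if (attempt >= maxAttempts) throw err; // Give up and surface the error
      // Exponential backoff: 100ms, 200ms, 400ms, ...
      const delay = baseDelayMs * 2 ** (attempt - 1);
      await new Promise((resolve) => setTimeout(resolve, delay));
    }
  }
}

// Usage with a fake transport that fails twice before succeeding.
const seenKeys = [];
let calls = 0;
async function flakySend(body, headers) {
  seenKeys.push(headers["Idempotency-Key"]);
  calls++;
  if (calls < 3) throw new Error("network timeout");
  return { status: 201, orderId: "order-789" };
}

postWithRetry(flakySend, { total: 299.99 }, { baseDelayMs: 10 }).then((res) => {
  console.log(res.orderId, calls, new Set(seenKeys).size); // order-789 3 1
});
```

All three attempts carried the same key, which is exactly what lets the server recognize the retries.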

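For first-time builders, this might seem like enough. Check if key exists, process if not, done. But production systems face three critical challenges that this simple approach doesn't handle: where to store the keys, how to stop two simultaneous requests from both claiming the same key, and what to do when a duplicate arrives while the original is still in flight (or its server has crashed).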

Let's solve each one.

Before we can detect duplicates, we need to answer: where do we store these keys?

Every idempotency key entry needs at minimum:

| Field | Type | Description |
|---|---|---|
| idempotency_key | UUID (Unique) | The key sent by the client |
| status | ENUM | started, executing, completed, failed |
| response_body | JSONB | The final result (null if still processing) |
| created_at | Timestamp | Used for cleanup and monitoring |

The status field is critical: it tells us whether a request is done or still being worked on. The response_body stores the complete API response so we can replay it exactly for duplicate requests.

Where should these records live? This is one of the most important architectural decisions you'll make. The two main candidates are Redis and PostgreSQL, so let's compare them:

Redis: The Speed Champion

```
┌──────────────────────────────────┐
│        Redis (In-Memory)         │
│                                  │
│ ✓ Sub-millisecond lookups        │
│ ✓ Built-in TTL for auto-cleanup  │
│ ✓ Atomic operations (SET NX)     │
│                                  │
│ ✗ Data can be lost on crash      │
│ ✗ Another service to manage      │
└──────────────────────────────────┘
```

PostgreSQL: The Durability Champion

```
┌──────────────────────────────────┐
│     PostgreSQL (Disk-Based)      │
│                                  │
│ ✓ ACID guarantees                │
│ ✓ Data survives crashes          │
│ ✓ Already in your stack          │
│                                  │
│ ✗ Slower than in-memory          │
│ ✗ Manual cleanup required        │
└──────────────────────────────────┘
```

Here's the strategic insight that powers production systems: you don't have to choose. Use both, each for what it does best.

```
┌─────────────┐
│   Request   │
└──────┬──────┘
       │
       ▼
┌──────────────┐
│    Redis     │ ◄──── Fast duplicate detection (cache layer)
│   (Lookup)   │
└──────┬───────┘
       │ Key not found
       ▼
┌──────────────┐
│  PostgreSQL  │ ◄──── Source of truth (persistent layer)
│ (Transaction)│
└──────┬───────┘
       │ Save result
       ▼
┌──────────────┐
│    Update    │ ◄──── Cache for next duplicate
│    Redis     │
└──────────────┘
```

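This layered defense strategy means most duplicates are answered straight from memory, while PostgreSQL remains the source of truth whenever Redis misses, expires a key, or loses data.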
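The read path of this layered design can be sketched with two in-memory maps standing in for Redis and the processed_requests table. The names (`cache`, `db`, `lookupResponse`) are hypothetical, and the expiry check imitates what Redis's `EX` option does for you; treat this as an illustration of the order of checks, not a client library.

```javascript
// Stand-ins: `cache` plays Redis (with expiry), `db` plays the processed_requests table.
const cache = new Map(); // key -> { value, expiresAt }
const db = new Map(); // key -> { status, response_body }

function cacheGet(key, now = Date.now()) {
  const entry = cache.get(key);
  if (!entry) return null;
  if (entry.expiresAt <= now) {
    cache.delete(key); // Expired, like a Redis key past its TTL
    return null;
  }
  return entry.value;
}

function cacheSet(key, value, ttlMs, now = Date.now()) {
  cache.set(key, { value, expiresAt: now + ttlMs });
}

// Check the fast layer first, fall back to the source of truth, and
// re-warm the cache so the next duplicate is answered from memory.
function lookupResponse(key, now = Date.now()) {
  const cached = cacheGet(key, now);
  if (cached) return { source: "cache", body: cached };

  const row = db.get(key);
  if (row && row.status === "completed") {
    cacheSet(key, row.response_body, 24 * 60 * 60 * 1000, now);
    return { source: "db", body: row.response_body };
  }
  return null; // Unknown key (or still executing): the caller must handle it
}
```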

Here's a mistake many developers make: they update Redis first, then the database. This creates a timing window in which a failure leaves the system in an inconsistent state.

The Wrong Sequence:

```
1. Save to Redis        ← Server crashes here
2. Save to PostgreSQL   ← Never happens
```

Result: Redis says "already processed" but there's no record in the database. The operation never actually completed, but future requests think it did.

The Correct Sequence:

```
┌─────────────────────────────┐
│      START TRANSACTION      │
└──────────────┬──────────────┘
               │
┌──────────────▼──────────────┐
│ Check processed_requests    │
│ table for key               │
└──────────────┬──────────────┘
               │
     ┌─────────┴──────────┐
     │                    │
   Exists            Not Exists
     │                    │
     ▼                    ▼
┌───────────────┐ ┌───────────────┐
│ ROLLBACK      │ │ Insert record │
│ Return cached │ │ status: exec  │
└───────────────┘ └───────┬───────┘
                          │
                          ▼
                  ┌───────────────┐
                  │ Execute Logic │
                  │ (Create Order)│
                  └───────┬───────┘
                          │
                          ▼
                  ┌───────────────┐
                  │ Update status │
                  │ Save response │
                  └───────┬───────┘
                          │
                          ▼
                  ┌───────────────┐
                  │    COMMIT     │
                  └───────┬───────┘
                          │
                          ▼
                  ┌───────────────┐
                  │ Update Redis  │
                  │ (Cache it)    │
                  └───────────────┘
```

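Why this order is critical: Redis is written only after PostgreSQL has committed, so the cache can never claim a result the database doesn't have. If the server crashes before the commit, the transaction rolls back and a retry starts cleanly; if it crashes after the commit but before the cache update, the next duplicate simply falls through to PostgreSQL and finds the stored result.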
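The ordering rule can be checked with a small simulation. Again the maps are hypothetical stand-ins for PostgreSQL and Redis, and `crashAfterCommit` injects a failure between the commit and the cache write. Because the database is written first, a retry after the "crash" still finds the completed record, and the order is created only once.

```javascript
const db = new Map(); // Stand-in for processed_requests (source of truth)
const cache = new Map(); // Stand-in for Redis

// Correct order: commit to the database first, then update the cache.
// `crashAfterCommit` simulates the server dying between the two steps.
function handle(key, createOrder, { crashAfterCommit = false } = {}) {
  const cached = cache.get(key);
  if (cached) return cached;

  const row = db.get(key);
  if (row) {
    cache.set(key, row.response_body); // Repair the cache from the source of truth
    return row.response_body;
  }

  const response = createOrder();
  db.set(key, { status: "completed", response_body: response }); // "COMMIT"
  if (crashAfterCommit) throw new Error("server crashed");
  cache.set(key, response); // Best effort: losing this step is harmless
  return response;
}
```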

Here's where things get interesting. Two identical requests hit your server at the exact same millisecond. Both check Redis, and neither sees a cached result. Both start a database transaction. What stops both from succeeding?

This is the atomicity challenge. Let's visualize the problem:

```
Time ──────────────────────────────────────────────────────────────────►

Request A: Check Redis ──► None found ──► Start DB Transaction ──► ?
Request B: Check Redis ──► None found ──► Start DB Transaction ──► ?
           └───────────────── Both happen at T0 ─────────────────┘
```

The answer is elegantly simple: let the database enforce uniqueness.

In PostgreSQL, add a UNIQUE constraint on the idempotency_key column:

```sql
CREATE TABLE processed_requests (
  id SERIAL PRIMARY KEY,
  idempotency_key UUID UNIQUE NOT NULL,
  status VARCHAR(20) NOT NULL,
  response_body JSONB,
  version INTEGER NOT NULL DEFAULT 1,
  created_at TIMESTAMP DEFAULT NOW()
);

-- No separate CREATE INDEX is needed: PostgreSQL automatically creates a
-- unique index to enforce the UNIQUE constraint on idempotency_key.
```

Now when two transactions race to insert:

```
Request A: BEGIN → INSERT → SUCCESS → Process Order → COMMIT
Request B: BEGIN → INSERT → ERROR 23505 (Unique Violation) → ROLLBACK
```

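Request B receives a unique constraint violation error (SQLSTATE 23505). In your application code, this is your signal that another request already owns the key: look up the stored record and return its response instead of processing the order again. One PostgreSQL detail worth knowing: if Request A's transaction has not committed yet, Request B's INSERT waits until A commits or rolls back, and fails only if A commits. The complete handler is included in the code listings at the end of this post.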

This is the first wall of defense against race conditions. The database guarantees atomicity: only one transaction can claim the key.
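Here is a runnable model of that signal. A tiny in-memory table (a hypothetical stand-in for PostgreSQL, not the `pg` driver) throws an error whose `code` is `"23505"`, the SQLSTATE PostgreSQL uses for unique_violation, and the handler treats it as "someone else owns this key" rather than as a failure. Returning 409 while the original is still executing corresponds to Option 1 below; later sections replace it with waiting.

```javascript
// In-memory stand-in for processed_requests with a UNIQUE idempotency_key.
class ProcessedRequests {
  constructor() {
    this.rows = new Map();
  }
  insert(key, status) {
    if (this.rows.has(key)) {
      const err = new Error("duplicate key value violates unique constraint");
      err.code = "23505"; // PostgreSQL SQLSTATE for unique_violation
      throw err;
    }
    this.rows.set(key, { status, response_body: null });
  }
  get(key) {
    return this.rows.get(key);
  }
}

// Claim the key; only the request whose INSERT succeeds runs the business logic.
function handleOrder(table, key, createOrder) {
  try {
    table.insert(key, "executing");
  } catch (err) {
    if (err.code !== "23505") throw err;
    const row = table.get(key);
    // Completed: replay the stored response. Still executing: ask the caller to retry.
    return row.status === "completed"
      ? { status: 200, body: row.response_body, replay: true }
      : { status: 409, body: { error: "Request with this key is in progress" } };
  }
  const body = createOrder();
  Object.assign(table.get(key), { status: "completed", response_body: body });
  return { status: 201, body };
}
```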

The unique constraint handles simultaneous arrivals, but there's a subtler problem: what if Request B arrives while Request A is still processing?

Scenario: Request A is calling a slow third-party payment API. This takes 8 seconds. Request B arrives at second 3 with the same idempotency key. Request A hasn't finished yet, so there's no response_body in the database. What should Request B do?

Option 1: Fast-Fail (409 Conflict)

Request B → Check DB → Status: "executing" → Return 409 Conflict

Simplest approach, but terrible UX. The client has to implement retry logic with exponential backoff.

Option 2: Transparent Wait

Request B → Check DB → Status: "executing" → Wait → Poll → Return result

Better UX: Request B waits for Request A to finish, then returns the same result. But this has a hidden danger: if the original request takes too long, Request B's connection can time out.

Option 3: Transparent Wait with Graceful Timeout (The Production Approach)

Request B → Poll briefly → Still processing? → Return 202 Accepted with status URL

Request B → Poll briefly → Completed? → Return result

This is the production-grade solution. It combines Option 2's transparent waiting with timeout handling. We'll implement this by building Option 2 first (distributed locks + polling), then adding graceful timeout degradation.

To make Option 2 work safely, we need a distributed lock. This prevents multiple processes from starting work on the same key simultaneously. Enter Redis SET NX (Set if Not eXists):

```
SET lock:idempotency_key:<uuid> 1 NX EX 30
```

This command says: "Set this key to value 1, only if it doesn't already exist, and expire it after 30 seconds."
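To make those semantics concrete without a running Redis, here is an in-memory model of `SET key value NX EX seconds`. It is a sketch under stated assumptions: a single process, an injectable clock, and a random owner token instead of the constant `1`, so that a request only deletes a lock it still owns. The Redis documentation recommends the same token-checking idea for releases, usually done atomically in a Lua script.

```javascript
const { randomUUID } = require("node:crypto");

// In-memory model of Redis `SET key value NX EX seconds` (single process only).
class LockStore {
  constructor(now = () => Date.now()) {
    this.now = now;
    this.entries = new Map(); // key -> { value, expiresAt }
  }

  // Returns true if the key was set, false if it already exists and is unexpired.
  setNxEx(key, value, seconds) {
    const existing = this.entries.get(key);
    if (existing && existing.expiresAt > this.now()) return false;
    this.entries.set(key, { value, expiresAt: this.now() + seconds * 1000 });
    return true;
  }

  // Delete only if we still own the lock (Redis would do this check in a Lua script).
  releaseIfOwner(key, value) {
    const existing = this.entries.get(key);
    if (!existing || existing.value !== value || existing.expiresAt <= this.now()) return false;
    this.entries.delete(key);
    return true;
  }
}

// Returns an owner token on success, or null if another request holds the lock.
function tryAcquire(store, idempotencyKey, ttlSeconds = 30) {
  const token = randomUUID();
  const ok = store.setNxEx(`lock:idempotency_key:${idempotencyKey}`, token, ttlSeconds);
  return ok ? token : null;
}
```

The token matters once a lock has expired: a slow request whose lock timed out cannot delete the lock that a newer request now holds, which a constant value like `1` cannot detect.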

Here's the complete flow with locking:

```
Request A
    │
    ▼
Try lock in Redis: SET lock:abc-123 1 NX EX 30
    │
    ▼ SUCCESS
┌───────────────┐
│ Process Order │
│  (8 seconds)  │
└───────┬───────┘
        │
        ▼
Save to DB & release lock


Request B (arrives at second 3)
    │
    ▼
Try lock in Redis: SET lock:abc-123 1 NX EX 30
    │
    ▼ FAILED (lock exists)
┌─────────────┐
│ Wait & Poll │ ◄──────────────────────────┐
└──────┬──────┘                            │
       ├─► Lock still held ──► Sleep 200ms ┘
       │
       ▼ Lock released (Request A done)
Query DB for result
Return cached response
```

Critical mistake to avoid: Never use a blocking loop in Node.js. This will freeze the entire event loop:

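```javascript
// WRONG: a synchronous busy-wait between retries blocks the event loop
while (!lockAcquired) {
  const end = Date.now() + 200;
  while (Date.now() < end) {} // Spins the CPU; no other request can run meanwhile
  lockAcquired = await tryAcquireLock();
}
```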

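The correct pattern uses non-blocking delays: each iteration awaits a promise that resolves after a setTimeout, which hands control back to the event loop between polls. The full waitForLock function is included in the code listings at the end of this post.

This pattern keeps the server responsive while it waits, caps the total wait at maxWaitMs (5 seconds by default), and, when that budget runs out, hands off gracefully with a 202 Accepted response and a status URL.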
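A generalized, runnable version of that polling loop is sketched below. `fetchStatus` is a hypothetical caller-supplied function (in the real system it would query processed_requests), and the short intervals in the test only keep the demo fast.

```javascript
const sleep = (ms) => new Promise((resolve) => setTimeout(resolve, ms));

// Polls until the original request finishes or the wait budget runs out.
// Each `await sleep(...)` yields to the event loop, so other requests keep flowing.
async function waitForResult(fetchStatus, { maxWaitMs = 5000, pollIntervalMs = 200 } = {}) {
  const deadline = Date.now() + maxWaitMs;
  while (Date.now() < deadline) {
    const row = await fetchStatus();
    if (row && (row.status === "completed" || row.status === "failed")) {
      return { done: true, row };
    }
    await sleep(pollIntervalMs);
  }
  return { done: false }; // Caller degrades to 202 Accepted + statusUrl
}
```

Treating "failed" as a terminal state matters: without it, a duplicate of a request that failed would poll until the timeout for no reason.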

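We've built the foundation with distributed locks and polling. Now we need to handle the reality of HTTP connection timeouts. Most infrastructure has hard limits: load balancers, reverse proxies, and HTTP clients close connections that stay open too long, often somewhere between 30 and 60 seconds.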

If Request A takes 45 seconds to process, and Request B has been polling for 39 seconds, the connection might get severed before Request A finishes.

This is the graceful degradation piece that completes Option 3. Instead of letting Request B's connection hang until it times out, we proactively hand off to a status-based architecture:

Phase 1: Initial Request

```
POST /api/orders
Idempotency-Key: abc-123

← 202 Accepted
{
  "message": "Your order is being processed",
  "statusUrl": "/api/orders/status/abc-123",
  "idempotencyKey": "abc-123"
}
```

Phase 2: Client Polls Status Endpoint

```
GET /api/orders/status/abc-123
← 200 OK
{ "status": "processing" }

... wait 2 seconds ...

GET /api/orders/status/abc-123
← 200 OK
{
  "status": "completed",
  "result": { "orderId": "order-789", "total": 299.99 }
}
```

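This is the asynchronous request-reply pattern that many APIs use for long-running operations. The benefit: no connection has to stay open for the full duration of the work, so infrastructure timeouts no longer cut clients off mid-request.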
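The status endpoint itself can stay small. Below is a framework-agnostic sketch: `statusResponse` is a hypothetical helper that maps a processed_requests row to the HTTP status and body a client would see, matching the Phase 2 responses above. Wiring it to a route such as GET /api/orders/status/:key is left to your web framework.

```javascript
// Maps a processed_requests row (or undefined) to { httpStatus, body }.
function statusResponse(row) {
  if (!row) {
    return { httpStatus: 404, body: { error: "Unknown idempotency key" } };
  }
  switch (row.status) {
    case "started":
    case "executing":
      return { httpStatus: 200, body: { status: "processing" } };
    case "completed":
      return { httpStatus: 200, body: { status: "completed", result: row.response_body } };
    case "failed":
      return { httpStatus: 200, body: { status: "failed", error: row.response_body } };
    default:
      throw new Error(`Unexpected status: ${row.status}`);
  }
}
```

One design choice here, which you may want differently: the status lookup itself returns 200 even when the underlying operation failed, because the status resource was found; the outcome lives in the body.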

Here's the nightmare scenario: Request A acquires the lock, sets status: executing in the database, and then the server crashes. Power outage. Kernel panic. Docker container killed.

Request B arrives later and sees status: executing, stuck forever. Without intervention, this key is permanently poisoned. All future requests will see "still processing" even though nothing is processing.

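The fix is a janitor process: a background worker that periodically scans for requests stuck in "executing", marks them as failed, and sends them to a dead letter queue for review. Its code is included in the code listings at the end of this post.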

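Why both steps? Marking the row as failed gives future requests with this key a proper error response instead of an endless "still processing", and the dead letter queue leaves an audit trail so the ops team can investigate what went wrong.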

Alternative strategies:

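This ensures that a crash can never poison a key permanently: every stuck request eventually resolves to a definite state, and every failure leaves a record behind.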
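A self-contained version of the janitor can be sketched with an in-memory array standing in for processed_requests (created_at in epoch milliseconds) and a plain array standing in for the dead letter queue; both, and the name `cleanupStuckRows`, are hypothetical. It also applies the version check discussed below, so a row that changed after it was selected is left alone.

```javascript
const STUCK_AFTER_MS = 5 * 60 * 1000;

// In-memory sketch of the janitor: `rows` plays processed_requests,
// `deadLetters` plays the dead letter queue.
function cleanupStuckRows(rows, deadLetters, now = Date.now()) {
  const cutoff = now - STUCK_AFTER_MS;

  // "SELECT": snapshot the stuck rows, including the version we saw.
  const stuck = rows
    .filter((r) => r.status === "executing" && r.created_at < cutoff)
    .map((r) => ({ key: r.idempotency_key, version: r.version }));

  for (const { key, version } of stuck) {
    // "UPDATE ... WHERE idempotency_key = $1 AND version = $2": in a real database,
    // another process could change the row between the SELECT and this point.
    const row = rows.find((r) => r.idempotency_key === key && r.version === version);
    if (!row) continue; // Version moved on: someone else handled it

    row.status = "failed";
    row.response_body = { error: "Request timed out" };
    row.version += 1;
    deadLetters.push({ idempotency_key: key, failed_at: now }); // Audit trail
  }
}
```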

There's one more subtle race condition: what if the janitor tries to mark a request as "failed" at the exact same moment the original Request A (which we thought was dead) suddenly completes?

Both try to update the same database row. Without protection, one will overwrite the other. If the janitor wins, we might mark a successful operation as "failed."

Add a version column to the processed_requests table. Every update increments it:

```sql
UPDATE processed_requests
SET status = 'completed',
    response_body = $1,
    version = version + 1
WHERE idempotency_key = $2
  AND version = $3 -- Only update if version matches
RETURNING version;
```

The sequence:

1. Request A reads the row: version: 1, status: executing
2. The janitor reads the row: version: 1, status: executing
3. Request A updates WHERE version = 1 → Success → version becomes 2
4. The janitor updates WHERE version = 1 → Fails → no rows affected

The janitor's update silently fails (it updates 0 rows). In your code, check the affected row count:

```javascript
const result = await db.query(
  "UPDATE processed_requests SET status = $1, version = version + 1 WHERE idempotency_key = $2 AND version = $3",
  ["failed", key, expectedVersion],
);

if (result.rowCount === 0) {
  // Version mismatch - someone else updated this first
  console.log("Optimistic lock conflict - request already updated");
}
```

This is called optimistic locking because it optimistically assumes conflicts are rare, but gracefully handles them when they occur.
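The four-step sequence above can be replayed in a few lines. `compareAndSet` is a hypothetical helper that behaves like the versioned UPDATE: it applies the change only when the stored version still matches, and reports how many rows it touched, the way `result.rowCount` does in the snippet above.

```javascript
// One row of processed_requests, as both actors initially read it.
const row = { idempotency_key: "abc-123", status: "executing", response_body: null, version: 1 };

// Behaves like UPDATE ... SET ..., version = version + 1 WHERE version = $expected.
// Returns the affected row count (0 or 1).
function compareAndSet(target, expectedVersion, changes) {
  if (target.version !== expectedVersion) return 0;
  Object.assign(target, changes, { version: target.version + 1 });
  return 1;
}

// Steps 1-2: Request A and the janitor both read version 1.
const versionSeenByA = row.version;
const versionSeenByJanitor = row.version;

// Step 3: Request A finishes first and wins.
const aRows = compareAndSet(row, versionSeenByA, {
  status: "completed",
  response_body: { orderId: "order-789" },
});

// Step 4: the janitor's stale update affects 0 rows, so the success is preserved.
const janitorRows = compareAndSet(row, versionSeenByJanitor, { status: "failed" });

console.log(aRows, janitorRows, row.status, row.version); // 1 0 completed 2
```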

Let's bring everything together. Here's the full flow of a production-grade idempotent API:

```
┌───────────────┐
│    Client     │
│ Generate UUID │
└───────┬───────┘
        │ POST /orders
        │ Idempotency-Key: abc-123
        ▼
API Server (Node.js)
  1. Extract idempotency key from header
  2. Check Redis cache
     └─► If found → return cached response
                    + header: Idempotent-Replay: true
  3. Try to acquire distributed lock (Redis)
     └─► SET lock:abc-123 1 NX EX 30
         │
         ├── Lock acquired
         │     Start DB Transaction
         │       ├─ Check if key exists
         │       ├─ Insert with version=1
         │       ├─ Execute business logic
         │       ├─ Save response
         │       ├─ Update status=completed
         │       └─ COMMIT
         │     ├─► Update Redis cache (TTL: 24h)
         │     └─► Release distributed lock
         │
         └── Lock failed
               Wait & Poll (5s max)
               └─► Timeout reached → Return 202 Accepted + statusUrl

Redis                              PostgreSQL
  • Lock keys (TTL: 30s)             • Source of truth
  • Cache layer (TTL: 24h)           • Unique constraint
                                     • Versioning

Background Janitor Process (runs every 5 minutes)
  1. Find requests stuck in "executing"
  2. Check if created_at is more than 5 minutes ago
  3. Attempt to mark as failed (with version)
  4. If conflict → another process handled it
  5. Log to dead letter queue for review
```

Here's your roadmap to building this system step-by-step:

- processed_requests table with unique constraint on idempotency_key
- version column for optimistic locking
- processed_requests table
- GET /api/orders/status/:key
- Idempotent-Replay: true header for cached responses

How long should idempotency keys be stored? Short answer: 24 hours for most use cases.

Reasoning: Idempotency keys protect against retries due to network issues, which typically happen within seconds or minutes. Storing them for 24 hours provides a generous buffer for offline mobile clients or batch retry jobs.

For financial operations, consider longer retention (30-90 days) for audit trails, even if the cache TTL is shorter.

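What if a client reuses the same key for a different request? This is a client bug, not a server problem. Your API should trust that clients generate unique keys correctly, but you can still add validation: store a hash of the request body alongside the key, and reject a later request whose body hashes differently. The validation code is included in the code listings at the end of this post.

This catches the error early and provides clear feedback to the client.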
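One caveat with hashing JSON.stringify(req.body): two bodies with the same fields in a different key order produce different strings, and so different hashes. If your clients may not serialize fields consistently, a sketch like the following hashes a canonical form instead. `canonicalize` is a hypothetical helper that recursively sorts object keys; it is not a standard library function.

```javascript
const crypto = require("node:crypto");

// Recursively rebuilds objects with sorted keys so logically equal bodies
// serialize identically. Arrays keep their order, since order is meaningful there.
function canonicalize(value) {
  if (Array.isArray(value)) return value.map(canonicalize);
  if (value !== null && typeof value === "object") {
    return Object.fromEntries(
      Object.keys(value)
        .sort()
        .map((k) => [k, canonicalize(value[k])]),
    );
  }
  return value;
}

// SHA-256 over the canonical JSON form of the request body.
function requestHash(body) {
  return crypto.createHash("sha256").update(JSON.stringify(canonicalize(body))).digest("hex");
}
```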

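Does every endpoint need idempotency keys? Not necessarily. Prioritize operations where a duplicate does real damage, such as payments, order creation, and other POST requests with side effects.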

For low-stakes operations (like logging analytics events), the complexity might not be worth it.

If your operation involves multiple steps (charge payment, update inventory, send email), wrap everything in a database transaction. If any step fails, roll back the transaction and save status: failed with the error details.

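For operations that can't be rolled back (like third-party API calls), use the idempotent wrapper pattern: send an idempotency key to the third party as well, so that if your request is retried, the provider recognizes the repeated call instead of performing it twice.

Services like Stripe accept their own idempotency keys for exactly this reason.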
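One way to apply this, sketched under assumptions: derive the downstream key deterministically from the incoming idempotency key plus a step name, so every retry of the same request sends the same key to the provider. `downstreamKey` and `createFakeProvider` are hypothetical; the fake provider only mimics the documented behavior of idempotency-aware payment APIs (a repeated key returns the original result) and is not Stripe's client library.

```javascript
// Derive a stable key per side-effecting step; retries reuse the same value.
function downstreamKey(idempotencyKey, step) {
  return `${idempotencyKey}:${step}`;
}

// Hypothetical provider that returns the original result when it sees
// a key again, instead of charging twice.
function createFakeProvider() {
  const seen = new Map();
  let charges = 0;
  return {
    charge(amount, { idempotencyKey }) {
      if (!seen.has(idempotencyKey)) {
        charges += 1;
        seen.set(idempotencyKey, { chargeId: `ch_${charges}`, amount });
      }
      return seen.get(idempotencyKey);
    },
    get charges() {
      return charges;
    },
  };
}

const provider = createFakeProvider();
const key = "7f3e9c2a-8d1b-4c5a-b9e6-1f0a2c3d4e5f";

// The original attempt and a retry after a crash send the same downstream key.
const first = provider.charge(299.99, { idempotencyKey: downstreamKey(key, "charge") });
const retry = provider.charge(299.99, { idempotencyKey: downstreamKey(key, "charge") });
console.log(first.chargeId === retry.chargeId, provider.charges); // true 1
```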

Pitfall #1: Not Setting TTL on Redis Lock Keys

Without a TTL, a crash leaves the lock permanently acquired. Always set an expiry with the EX option: SET lock:key 1 NX EX 30

Pitfall #2: Releasing Locks Too Early

If you release the lock before committing the database transaction, another request can slip through and see incomplete data. Release the lock only after the database commit succeeds.

Pitfall #3: Storing Sensitive Data in Response Cache

If your API returns credit card numbers or passwords (please don't), don't cache them in Redis. Either filter sensitive fields or skip caching entirely for sensitive endpoints.

Pitfall #4: Not Handling Lock Acquisition Failures

If Redis is down and SET NX fails, don't crash. Fall back to database-only mode (slower but functional).
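A minimal sketch of that fallback, assuming hypothetical async `redisLookup` and `dbLookup` functions that return a stored response or `null`: treat any Redis error as a cache miss and let PostgreSQL, whose unique constraint still guards correctness, answer instead.

```javascript
// Try the cache; on any Redis error, degrade to database-only mode.
async function findStoredResponse(key, { redisLookup, dbLookup, log = console.warn }) {
  try {
    const cached = await redisLookup(key);
    if (cached) return { source: "redis", body: cached };
  } catch (err) {
    log(`Redis unavailable, falling back to PostgreSQL: ${err.message}`);
  }
  const stored = await dbLookup(key);
  return stored ? { source: "postgres", body: stored } : null;
}
```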

Pitfall #5: Using Blocking Sleep in Node.js

Always use setTimeout with promises for async delays. Never block the event loop.

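Finally, four tests are worth writing for this system, and each one is included in the code listings at the end of this post: a duplicate request that should be replayed, two identical requests sent at the same moment, a slow request that should degrade to 202 Accepted, and a stuck request that the janitor should mark as failed.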

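Building a production-grade idempotent API system isn't just about checking if a key exists. It's about handling the chaos of distributed systems with defensive layers: Redis for fast duplicate detection, a PostgreSQL unique constraint for atomicity, distributed locks and status polling for in-flight requests, optimistic locking for conflicting updates, and a janitor for crash recovery.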

The beauty of this architecture is that each layer adds protection without requiring the previous layer to be perfect. Redis can fail: Postgres catches it. Locks can time out: status polling handles it. Servers can crash: the janitor recovers.

Payment platforms such as Stripe expose this same idempotency-key mechanism in their public APIs so that clients can retry safely. Now you have the blueprint to build the same protection into your own APIs.


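Code listings

Handling the unique violation (Request B's side of the race):

```javascript
try {
  // Claim the key: the UNIQUE constraint lets exactly one INSERT succeed
  await db.query(
    "INSERT INTO processed_requests (idempotency_key, status) VALUES ($1, $2)",
    [idempotencyKey, "executing"],
  );

  // Process the request...
} catch (error) {
  if (error.code === "23505") {
    // Unique violation: another request already claimed this key.
    // (If the INSERT ran inside a transaction, ROLLBACK first: PostgreSQL
    // rejects further queries in a transaction that has hit an error.)
    const result = await db.query(
      "SELECT status, response_body FROM processed_requests WHERE idempotency_key = $1",
      [idempotencyKey],
    );
    const row = result.rows[0];
    if (row.status === "completed") {
      return row.response_body; // Replay the stored response
    }
    // Still executing: response_body is null, so wait for the original instead
    return waitForLock(idempotencyKey);
  }
  throw error;
}
```

waitForLock, polling with non-blocking delays and a graceful 202 handoff:

```javascript
// Polls the database until the original request finishes, without blocking
// the event loop. Gives up after maxWaitMs and hands off to the status endpoint.
async function waitForLock(idempotencyKey, maxWaitMs = 5000) {
  const startTime = Date.now();
  const pollIntervalMs = 200;

  while (Date.now() - startTime < maxWaitMs) {
    // Check whether the original request has finished
    const result = await db.query(
      "SELECT status, response_body FROM processed_requests WHERE idempotency_key = $1",
      [idempotencyKey],
    );
    const row = result.rows[0];
    if (row?.status === "completed" || row?.status === "failed") {
      return row.response_body; // Replay the stored success or error response
    }

    // Non-blocking delay: yields to the event loop between polls
    await new Promise((resolve) => setTimeout(resolve, pollIntervalMs));
  }

  // Timeout reached - graceful handoff
  return {
    status: 202,
    message: "Processing",
    statusUrl: `/api/orders/status/${idempotencyKey}`,
  };
}
```

The janitor for stuck requests (moveToDeadLetterQueue is an application-specific helper):

```javascript
// Runs every 5 minutes
async function cleanupStuckRequests() {
  const fiveMinutesAgo = new Date(Date.now() - 5 * 60 * 1000);

  const stuckRequests = await db.query(
    `
    SELECT idempotency_key, version, created_at
    FROM processed_requests
    WHERE status = 'executing'
      AND created_at < $1
    `,
    [fiveMinutesAgo],
  );

  for (const request of stuckRequests.rows) {
    console.warn("Found stuck request:", request.idempotency_key);

    // Step 1: Mark as failed in the database, guarded by the version column
    // (optimistic locking) so a request that just completed is not overwritten.
    // Future requests with this key then receive a proper error response.
    const update = await db.query(
      `
      UPDATE processed_requests
      SET status = 'failed',
          response_body = $1,
          version = version + 1
      WHERE idempotency_key = $2
        AND version = $3
      `,
      [JSON.stringify({ error: "Request timed out" }), request.idempotency_key, request.version],
    );
    if (update.rowCount === 0) continue; // Another process updated it first

    // Step 2: Send to dead letter queue for ops team to investigate
    // This creates an audit trail of failures for manual review
    await moveToDeadLetterQueue(request);
  }
}
```

Rejecting a key that is reused with a different request body (getStoredRequest is an application-specific helper that loads the stored row):

```javascript
const crypto = require("crypto");

// Hash the request body and compare it to the hash stored with the key
const requestHash = crypto
  .createHash("sha256")
  .update(JSON.stringify(req.body))
  .digest("hex");

const storedRequest = await getStoredRequest(idempotencyKey);

if (storedRequest && storedRequest.requestHash !== requestHash) {
  // Note: the IETF Idempotency-Key header draft specifies 422 for this case
  return res.status(400).json({
    error: "Idempotency key reused with different request body",
  });
}
```

Test: a duplicate request is replayed from the stored response:

```javascript
const key = generateUUID();

// First request
const response1 = await makeRequest('/orders', { items: [...] }, { 'Idempotency-Key': key });
expect(response1.status).toBe(201);

// Duplicate request
const response2 = await makeRequest('/orders', { items: [...] }, { 'Idempotency-Key': key });
expect(response2.status).toBe(200);
expect(response2.body).toEqual(response1.body);
expect(response2.headers['idempotent-replay']).toBe('true');
```

Test: two simultaneous requests create only one order:

```javascript
const key = generateUUID();

// Send two identical requests simultaneously
const [response1, response2] = await Promise.all([
  makeRequest('/orders', { items: [...] }, { 'Idempotency-Key': key }),
  makeRequest('/orders', { items: [...] }, { 'Idempotency-Key': key }),
]);

// Both should succeed with same response
expect(response1.body.orderId).toBe(response2.body.orderId);

// Verify only ONE order was created in database
const orders = await db.query('SELECT COUNT(*) FROM orders WHERE ...');
// node-postgres returns COUNT(*) (a bigint) as a string, so convert it first
expect(Number(orders.rows[0].count)).toBe(1);
```

Test: a slow request degrades to 202 Accepted:

```javascript
const key = generateUUID();

// Mock slow processing (10 seconds)
mockSlowOrder();

// The original request holds the lock while it processes; don't await it yet
const original = makeRequest('/orders', { items: [...] }, { 'Idempotency-Key': key });
await sleep(100); // Let the original acquire the lock first

// The duplicate polls for up to 5 seconds, then should receive 202 Accepted
const response = await makeRequest('/orders', { items: [...] }, { 'Idempotency-Key': key });
expect(response.status).toBe(202);
expect(response.body.statusUrl).toMatch(/\/orders\/status\//);

// Wait for the original to finish, then poll the status endpoint
await original;
const statusResponse = await makeRequest(response.body.statusUrl);
expect(statusResponse.status).toBe(200);
expect(statusResponse.body.status).toBe('completed');
```

Test: the janitor recovers a stuck request:

```javascript
// Manually insert a stuck request
await db.query(
  `
  INSERT INTO processed_requests (idempotency_key, status, created_at)
  VALUES ($1, 'executing', NOW() - INTERVAL '10 minutes')
  `,
  [testKey],
);

// Run janitor
await cleanupStuckRequests();

// Verify it was marked as failed
const result = await db.query(
  "SELECT status FROM processed_requests WHERE idempotency_key = $1",
  [testKey],
);
expect(result.rows[0].status).toBe("failed");
```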